Intel’s goal is to support all artificial intelligence models, including generative AI, with responsible perspectives and principles.

The following is an opinion editorial by Ilke Demir of Intel Corporation:

Generative artificial intelligence (AI) describes the algorithms used to create new data that can resemble human-generated content, including audio, code, images, text, simulations and videos. This technology is trained on existing content and data, creating the potential for applications like natural language processing, computer vision, the metaverse and speech synthesis.

Generative AI is not new. It’s the tech that created voice assistants, infinitely evolving games and chatbots.

Recently, several powerful generative AI tools, such as ChatGPT and DALL·E 2, have become widely available, enabling people to build apps on them, experiment and post the results online. You may have seen one in action when social profiles were flooded with historically inspired photos from the MyHeritage AI Time Machine, which uses AI to generate hypothetical pictures of how a person would have looked had they lived in different eras. While some have voiced concerns that generative AI could threaten jobs, there is a greater opportunity to use generative AI responsibly to improve people’s efficiency and creativity.

Intel’s Trusted Media team works to build generative AI applications with humans in mind. The team strives to create AI that improves people’s lives and limits harm, and to build tools that make other technologies more natural. It does all of this with responsibility built into each step of the process, not just the end.

Intel’s Approach to Generative AI

In the past few years, generative AI has become more powerful, and therefore more capable of doing problematic things in a more convincing and realistic manner.

For example, generative models for deepfakes aim to impersonate people. We defend against this in two ways. The first is our deepfake detection algorithms, integrated into our real-time platform. FakeCatcher, the core of the system, can detect fake videos with a 96% accuracy rate, enabling users to distinguish between real and fake content in real time. The second is our responsible generators, one of which makes “human puzzles.” Rather than creating images by training on real people’s faces, this generator mixes and matches facial regions (nose of person A, mouth of person B, eyes of person C, etc.) to create an entirely new face that does not already exist in a data set.
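
To make the mix-and-match idea concrete, here is a toy sketch in Python. It is not Intel’s generator: it assumes each donor image is already aligned to a shared per-pixel region mask and simply copies pixels region by region, whereas a real generator would also have to blend the seams into a coherent face. The names `compose_face`, `sources` and `mask` are hypothetical.

```python
from typing import Dict, List

# Hypothetical toy types for illustration only: a grayscale "image" is a grid
# of ints, and a region mask assigns each pixel a facial-region label.
Image = List[List[int]]
RegionMask = List[List[str]]


def compose_face(sources: Dict[str, Image], mask: RegionMask) -> Image:
    """Copy each pixel from the donor image assigned to that pixel's region.

    `sources` maps a region label (e.g. "eyes") to a donor image; every donor
    must have the same height and width as `mask`. This naive copy does no
    blending, so it only illustrates the region-mixing step.
    """
    height, width = len(mask), len(mask[0])
    for label, img in sources.items():
        if len(img) != height or any(len(row) != width for row in img):
            raise ValueError(f"donor image for {label!r} does not match the mask shape")

    composite: Image = []
    for y in range(height):
        row = []
        for x in range(width):
            label = mask[y][x]                # which region this pixel belongs to
            row.append(sources[label][y][x])  # take the pixel from that region's donor
        composite.append(row)
    return composite


# Usage: three 3x3 donors standing in for persons A, B and C.
person_a = [[1] * 3 for _ in range(3)]
person_b = [[2] * 3 for _ in range(3)]
person_c = [[3] * 3 for _ in range(3)]
mask = [["eyes"] * 3, ["nose"] * 3, ["mouth"] * 3]
face = compose_face({"eyes": person_a, "nose": person_b, "mouth": person_c}, mask)
print(face)  # [[1, 1, 1], [2, 2, 2], [3, 3, 3]]
```

Because no single donor supplies every region, the composite does not match any one input image, which is the property the paragraph above describes.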

We believe that AI should not only prevent harm but also enhance lives. To fulfill this vision, the team’s speech synthesis project aims to enable people who have lost their voices to talk again. This technology is used in Intel’s I Will Always Be Me digital storybook project, in partnership with Dell Technologies, Rolls-Royce and the Motor Neuron Disease (MND) Association. The interactive website allows anyone diagnosed with MND, or with any disease expected to affect their ability to speak, to record their voice for use on an assistive speech device.

Finally, the Trusted Media research team is working on using generative AI to make 3D experiences more realistic. For example, Intel’s CARLA is an open source urban driving simulator developed to support the development, training and validation of autonomous driving systems. Generative AI can make the scenes surrounding the driver look more realistic and natural. Intel’s generative AI approaches also simplify 3D creation and rendering workflows, saving 3D artists hours of work and making games run much faster.

The Role of Responsible AI

We understand that we cannot trust generative AI results without understanding how these systems work. Intel has long recognized the importance of the ethical and societal implications of deploying such technological advancements. This is especially true for AI applications, and we remain committed to using the best methods, principles and tools to ensure responsible practices at every step of our product cycles. As generative AI evolves, it is critical that humans remain at the center of this work. Responsible AI begins with the design and development of systems, not with cleaning up after they are deployed.

It’s important to train AI systems so they do not generate harmful material. This is where our Responsible AI framework comes in. We improve safety and security by conducting rigorous, multidisciplinary reviews throughout the development lifecycle. This includes establishing diverse development teams to reduce biases, developing AI models that follow ethical principles, extensively evaluating our results from both technical and human perspectives, and collaborating with industry partners to mitigate potentially harmful uses of AI.

This framework helps us find positive ways to implement this technology and keeps people in control of how they can best leverage AI. A multidisciplinary approach allows us to deepen our knowledge and sharpen our focus, so we can tap into opportunities in the thriving AI sector while working within established ethical, moral and privacy parameters.

What’s Next

Our goal is to support all AI models, including generative AI, with responsible perspectives and principles. Ensuring a human perspective is present at all phases of development allows this technology to be more applicable, more collaborative and less harmful.

I look forward to seeing how the generative AI momentum grows, and to partnering closely with my team at Intel and across the industry to ensure its responsible advancement.

Ilke Demir is a senior staff research scientist in Intel Labs.

About Intel

Intel (Nasdaq: INTC) is an industry leader, creating world-changing technology that enables global progress and enriches lives. Inspired by Moore’s Law, we continuously work to advance the design and manufacturing of semiconductors to help address our customers’ greatest challenges. By embedding intelligence in the cloud, network, edge and every kind of computing device, we unleash the potential of data to transform business and society for the better. To learn more about Intel’s innovations, go to newsroom.intel.com and intel.com.

© Intel Corporation. Intel, the Intel logo and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.

View source version on businesswire.com: https://www.businesswire.com/news/home/20230208005328/en/

Orly Shapiro 1-949-231-0897 orly.shapiro@intel.com

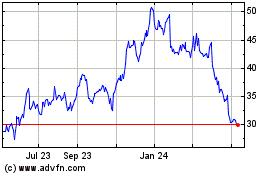

Intel (NASDAQ:INTC)

Historical Stock Chart

From Jun 2024 to Jul 2024

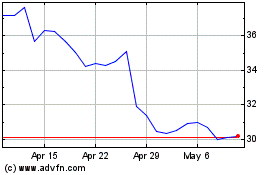

Intel (NASDAQ:INTC)

Historical Stock Chart

From Jul 2023 to Jul 2024