By Rob Copeland and Parmy Olson

Alphabet Inc. Chief Executive Sundar Pichai has bet big on

artificial intelligence as central to the company's future,

investing billions of dollars to embed the technology in the

conglomerate's disparate divisions. Now, it is one of his trickiest

management challenges.

Over the past 18 months, Google's parent has waded through one

controversy after another involving its top researchers and

executives in the field.

In the most high-profile incident, last month Google parted ways

with a prominent AI researcher, Timnit Gebru, after she turned in

studies critical of the company's approach to AI and complained to

colleagues about its diversity efforts. Her research findings

concluded that Google wasn't careful enough in deploying such

powerful technology and was callous about the environmental impact

of building supercomputers.

Mr. Pichai pledged an investigation into the circumstances

around her departure and said he would seek to restore trust. Ms.

Gebru's boss, Jeff Dean, told employees that he determined her

research was insufficiently rigorous.

Nearly 2,700 Google employees have since signed a public letter

that says Ms. Gebru's departure "heralds danger for people working

for ethical and just AI -- especially Black people and People of

Color -- across Google."

Last week, Google said it was investigating the company's

co-head of ethical AI, Margaret Mitchell, for allegedly downloading

and sharing internal documents with people outside the company. Ms.

Mitchell, who has criticized Mr. Pichai on Twitter for his handling

of diversity issues, didn't respond to requests for comment.

Alphabet's approach to AI is closely watched because the

conglomerate is widely seen as the industry leader in sponsoring

research -- both internal and external -- and developing new

applications for the technology, ranging from smart speakers to

virtual assistants. The nascent field has raised complex questions

about the growing influence of computer algorithms in a wide range

of areas of public and private life.

Google has sought to position itself as a standard-bearer for

ethical AI. "History is full of examples about how technology's

virtues aren't guaranteed," Mr. Pichai said in remarks to a

Brussels think tank last week. "While AI promises enormous benefits

for Europe and the world, there are real concerns about the

potential negative consequences."

Google's push to advance in AI through acquisition has also

added to its management challenges. In a previously unreported

move, Mustafa Suleyman, co-founder of Google's London-based

artificial-intelligence arm, DeepMind, was stripped in late 2019 of

most management responsibilities after complaints that he bullied

staff, according to people familiar with the matter.

DeepMind, which Google bought in 2014, hired an outside law firm

to conduct an independent probe into the complaints. At the end of

2019, Mr. Suleyman was moved to a different executive role within

the AI team at Google.

DeepMind and Google, in a joint statement, confirmed the

investigation into Mr. Suleyman's behavior and declined to say what

it found. The statement said that as a result of the probe Mr.

Suleyman "undertook professional development training to address

areas of concern, which continues, and is not managing large

teams." The companies said that in Mr. Suleyman's current Google

role, as vice president of artificial-intelligence policy, "he

makes valued contributions on AI policy and regulation."

In response to questions from The Wall Street Journal, Mr.

Suleyman said that he "accepted feedback that, as a co-founder at

DeepMind, I drove people too hard and at times my management style

was not constructive." He added, "I apologize unequivocally to

those who were affected."

Mr. Suleyman's appointment to a high-profile role at Google

bothered some DeepMind staff, as did a tweet from Mr. Dean

welcoming him to his new role at Google, according to current and

former employees.

"I'm looking forward to working with you more closely," Mr. Dean

wrote in the tweet.

Unlike in Google search, in which artificial intelligence is

employed to reorganize and resurface existing public information,

DeepMind has focused largely on health issues, such as crunching

vast amounts of patient data to figure out new ways to treat

disease.

DeepMind has struggled to meet financial expectations. The unit

had a loss of $649 million in 2019, according to a U.K. filing last

month. Google forgave more than $1 billion of loans to the unit

over the same period, the filing indicates.

Google has tried at various times to construct broader oversight

of its artificial-intelligence projects, with mixed success.

A DeepMind independent review board, meant to scrutinize the

unit and produce a public annual report, disbanded in late 2018

after board members chafed at what they said was incomplete access

to DeepMind's research and strategic plans.

Months later, an external AI ethics council created by Google

was disbanded after a week, following an employee petition and

other protests about right-leaning board members. A Google

spokesman said at the time that the company would "find different

ways of getting outside opinions on these topics."

Google's artificial-intelligence efforts date back at least a

decade, and the area has been a stand-alone division under Mr. Dean

since 2017. Mr. Pichai, in announcing the new structure that year,

said that AI would be central to the company's strategy and

operations, with advanced computing -- also called machine learning

-- threaded throughout the company.

University of Washington computer-science professor Pedro

Domingos said Google has been well aware of the many pitfalls

associated with AI. He remembers Alphabet board chairman John

Hennessy in a chat several years ago describing his biggest fears

as the search giant intensified its push in the field.

Mr. Domingos says Mr. Hennessy told him that if anything went

wrong with AI -- even at other companies -- Google would be

blamed.

"We are one misstep away from everything blowing up in our

faces," Mr. Hennessy said, according to Mr. Domingos. Mr. Hennessy

said he doesn't recall the specifics of the conversation but said

Mr. Domingos's recollection is probably right.

One early misstep came in 2015, when several Black Google users

were surprised to see that the artificial-intelligence technology

in the company's free photography software had automatically

labeled one of their albums of human photos "gorilla," based on

skin color.

Google apologized and fixed the problem, and publicly redoubled

promises to build internal safeguards to ensure its software was

programmed ethically.

The effort involved hiring researchers such as Ms. Gebru, the

former co-head of the company's Ethical Artificial Intelligence

team, who has been outspoken on the limitations of

facial-recognition software in identifying darker-skinned

individuals.

She was one of hundreds of employees who produced research,

sometimes with academic institutions, on AI. Unlike Google's other

engineers, the AI group functioned more like an academic department

-- tasked with debating larger issues rather than troubleshooting

products.

Reuters later reported that before Ms. Gebru's departure, Google

had launched a review of sensitive topics and in at least three

cases asked staff not to cast its technology in a negative light.

Google declined to comment on that.

Ms. Gebru and Mr. Dean didn't respond to requests for comment

for this article.

Mr. Domingos said Google's artificial-intelligence advancements

have helped create faster and more accurate search results -- as

well as more relevant advertising.

Mr. Domingos said most of Google's problems related to AI are

rooted in the company's approach to managing staff, adding that

science, and not ideology, should guide ethical debates.

"Google is the coddler-in-chief," he said. "Their employees are

so coddled that they feel entitled to make more and more demands"

regarding how the company approaches AI and related issues.

Ms. Gebru's departure highlights the challenge Google leadership

faces, as the two sides can't even agree on whether she resigned,

as the company says, or was fired during her vacation, as she has

said.

Write to Rob Copeland at rob.copeland@wsj.com and Parmy Olson at

parmy.olson@wsj.com

(END) Dow Jones Newswires

January 26, 2021 11:17 ET (16:17 GMT)

Copyright (c) 2021 Dow Jones & Company, Inc.

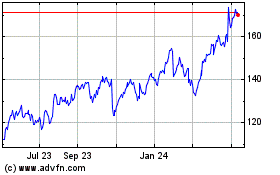

Alphabet (NASDAQ:GOOG)

Historical Stock Chart

From Jun 2024 to Jul 2024

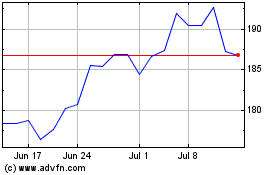

Alphabet (NASDAQ:GOOG)

Historical Stock Chart

From Jul 2023 to Jul 2024