How Adobe's Ethics Committee Helps Manage AI Bias

May 05 2021 - 9:16PM

Dow Jones News

By Jared Council

Review boards can help companies mitigate some of the risks

associated with using artificial intelligence, according to Adobe

Inc. executive Dana Rao.

Mr. Rao, Adobe's general counsel, said one of the top risks in

using AI systems is that the technology can perpetuate harmful bias

against certain demographics, based on what it learns from data.

Ethics committees can be one way of managing those risks and

putting organizational values into practice.

Adobe's AI ethics committee, launched two years ago, has been

able to review new features for potential bias before those

features are deployed, Mr. Rao said Wednesday at The Wall Street

Journal's Risk & Compliance Forum. The committee is made up of

employees of various ethnicities and genders from different parts

of the company, including legal, government relations and

marketing.

"It takes a lot of people across your company to help figure

this out," he said. "Sometimes we might look at it and say there's

not an issue here," he added, but getting a diverse group of people

together can help identify issues product developers might

miss.

A feature designed to detect unauthorized purchases of Adobe

software, for instance, could inadvertently learn to block

customers of a certain demographic, he said.

Mr. Rao said the AI ethics committee recently reviewed a

fraud-detection feature that could potentially discriminate against

certain groups.

"[AI] can learn from last names and geographies and make a

connection that...there's a lot more credit card fraud coming from

Brazil," Mr. Rao said. "And it may not just stop people from Brazil

coming in, which would be bad. It might stop people with Brazilian

names from [purchasing] the software."

He said the committee directed the team proposing the feature to

conduct further testing.

Mr. Rao said an AI ethics assessment is one mechanism for

identifying which features Adobe's ethics committee should review.

This is a form product developers fill out that spells out what the

AI feature will do and how it works.

If the assessment shows no major ethical risks, as with an AI

tool that recommends text fonts, then the team behind it

doesn't need to present the feature to the board for review, he

said.

It's also important for the review board to be made up of

diverse voices, he said.

"We've had examples where some African-American members on our

committee have spotted issues in an imaging AI filter that no one

else did, because it affected only people with Black skin or their

hair specifically and no one else would have gotten the issue."

Write to Jared Council at jared.council@wsj.com

(END) Dow Jones Newswires

May 05, 2021 21:01 ET (01:01 GMT)

Copyright (c) 2021 Dow Jones & Company, Inc.

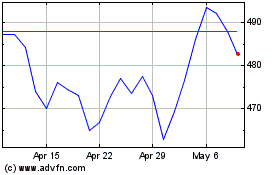

Adobe (NASDAQ:ADBE)

Historical Stock Chart

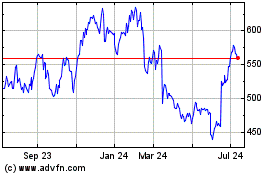

From Aug 2024 to Sep 2024

Adobe (NASDAQ:ADBE)

Historical Stock Chart

From Sep 2023 to Sep 2024