By Steven Rosenbush

As babies drop spoons and cups from their high chairs, they come to understand the concept of gravity. To a parent, it might seem like the process takes forever, but babies typically grasp the idea in a few months.

Algorithms require much more data and time to learn much narrower lessons. A handful of scientists are pushing the furthest limits of artificial intelligence by training it to better learn by itself, more like a baby.

"This is the single most important problem to solve in AI today," says Yann LeCun, chief artificial intelligence scientist at Facebook Inc.

It is a Manhattan Project-like effort that will go on for years, if not decades. At Facebook, Alphabet Inc.'s Google and other companies and universities around the world, scientists are working to create better AI that learns through self-supervision, teaching itself about the world the way people do. The immediate goal is broader AI that can perform multiple tasks, but that could one day lead to artificial general intelligence, or machines with humanlike thinking.

Scientists have had some early success with self-supervised learning, especially in areas such as natural-language processing used in mobile phones, smart speakers and customer-service bots.

While there is no assurance of success, ongoing innovations could help unlock applications from the creation of fully autonomous vehicles to virtual tutors for school children, more effective medical-imaging analysis and the real-time identification of hate speech on Facebook, according to Dr. LeCun.

Today, training AI is time-consuming and expensive, Dr. LeCun says, and for all that effort it can't comprehend concepts such as gravity. You might be able to teach today's AI about the dangers of driving a car too close to a cliff, he says, "but you would have to crash thousands of times."

In self-supervised learning, AI can train itself without the need for external labels attached to the data. It doesn't need to be told "this is a cat" to identify other images of cats, or to distinguish between images of "cats" and "chairs."
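
As a rough illustration of how training signals can come from the data itself rather than from human labels, the sketch below hides one word at a time in a sentence and treats the hidden word as the thing to predict. It uses text rather than images for simplicity, and the function name `make_masked_examples` and the `[MASK]` token are assumptions made for this example, not anything described by Dr. LeCun.

```python
def make_masked_examples(sentence, mask_token="[MASK]"):
    """Build (input, target) training pairs from raw text alone.

    No human-written label is needed: the target for each pair is simply
    the word that was hidden. (Hypothetical helper for illustration.)
    """
    words = sentence.split()
    examples = []
    for i, target in enumerate(words):
        # Replace the i-th word with the mask token and keep the rest.
        masked = words[:i] + [mask_token] + words[i + 1:]
        examples.append((" ".join(masked), target))
    return examples


examples = make_masked_examples("the cat sat")
for masked_sentence, hidden_word in examples:
    print(masked_sentence, "->", hidden_word)
```

Each pair could then be fed to a model that learns to fill in the blank, which is one common way self-supervised systems are set up.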

Dr. LeCun is now focused on applying self-supervised learning to a more complex problem, computer vision, in which computers interpret images such as a person's face.

The next phase, possible over the next decade or two, is to try to create a machine that can "learn how the world works by watching video, listening to audio and reading text," he says.

Dr. LeCun, who shared the 2018 A.M. Turing Award for his work on deep learning, joined Facebook in 2013.

"Yann's a visionary," says Kyunghyun Cho, a professor of computer science and data science at New York University's Courant Institute of Mathematical Sciences, with which Dr. LeCun is also affiliated.

The push for self-supervised learning is a high priority at Facebook, which is under pressure from lawmakers, outside groups and its own users to crack down harder on misinformation and hate speech.

An audit commissioned by Facebook, made public in July, found the company had not done enough to police hate speech and other problematic content on its platform, despite investments in AI-based censors and teams of human analysts trained to track down and remove harmful content.

Self-supervised learning, which can strengthen AI-based filters, is "very important" to detect hate speech in hundreds of languages, Dr. LeCun says. "You can't wait for users to flag hate speech. You have to take it down before anyone sees it," he says.

Not all research is focused on self-supervised learning. Another important approach is called neuro-symbolic, which combines two techniques, deep learning and symbolic AI. Using this neuro-symbolic approach, International Business Machines Corp. is at work on a technology that extends AI's strength in interpreting human language to machine language. An AI bot works alongside human engineers, reading computer logs to spot a system failure, understand why the system crashed, and offer a remedy. It can also be used to help people write software code, suggesting ideas much as a spell-checker might.

Broader AI could, in time, increase the pace of scientific discovery, given its potential to spot patterns not otherwise evident, says Dario Gil, director of IBM Research. "It would help us address huge problems, such as climate change and developing vaccines."

Until now, the best-performing self-supervised learning has relied on "contrastive learning," or using examples of what a thing is not to train the system to recognize the thing itself, according to Michal Valko, a machine learning scientist at DeepMind, a U.K.-based Google division focused on AI. A few images of a dog might be included in a data set of cat images to better illustrate what a cat is. The so-called negative examples were thought to improve the performance of the system but could introduce errors, Dr. Valko says.
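
To make the role of negative examples concrete, here is a minimal sketch of one common contrastive objective, often called an InfoNCE-style loss. It is an assumption chosen for illustration, not necessarily the formulation the researchers quoted here used, and the two-number "embeddings" for the cat and dog images are invented by hand rather than produced by a real model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def contrastive_loss(anchor, positive, negatives, temperature=0.5):
    """InfoNCE-style loss: low when the anchor is close to the positive
    example and far from every negative example."""
    pos = math.exp(cosine_similarity(anchor, positive) / temperature)
    neg = sum(
        math.exp(cosine_similarity(anchor, n) / temperature) for n in negatives
    )
    return -math.log(pos / (pos + neg))


# Hand-made toy embeddings (assumed values, for illustration only).
cat_photo_1 = [1.0, 0.0]
cat_photo_2 = [0.9, 0.1]
dog_photo = [0.0, 1.0]

# Correct pairing: the two cats are the match, the dog is the negative.
good = contrastive_loss(cat_photo_1, cat_photo_2, [dog_photo])
# Wrong pairing: the dog is treated as the match, a cat as the negative.
bad = contrastive_loss(cat_photo_1, dog_photo, [cat_photo_2])
print(good < bad)
```

Training pushes a model toward embeddings that make this loss small, which is why the choice of negatives matters: a badly chosen negative, such as another cat photo, penalizes the model for a correct grouping.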

A promising idea in self-supervised learning emerged in June, when Dr. Valko and others at DeepMind published a paper outlining a new approach. The DeepMind research showed that these negative examples could be eliminated.

"By doing so we improve the performance, make the method more robust, and possibly increase the applicability of the method," he says.

For AI to model and navigate the surrounding world, it will also be important for AI to go beyond predicting the next item in a sequence, one at a time. Right now, natural-language processing, for example, predicts the next word in a sequence by probability. Ultimately, AI that learns in a self-supervised way would be able to predict a sequence of events in an arbitrary order and skip unimportant steps -- much as humans learn to go to the store without having to perform each step of the process in the same order every time, says NYU's Dr. Cho.
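
Predicting the next word "by probability" can be sketched with a simple word-pair (bigram) counter. This is far cruder than the neural language models in use today and is offered only as an assumption-laden illustration; the toy sentence and the function names are invented for this example.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(model, word):
    """Turn the follower counts for `word` into probabilities."""
    followers = model.get(word, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # The word was never seen with a follower.
    return {w: c / total for w, c in followers.items()}


# Toy training text (invented for illustration).
corpus = "i need to go to the store and i need to buy milk"
model = train_bigram_model(corpus)
probs = next_word_probabilities(model, "to")
print(probs)
```

A model like this can only step forward one word at a time, which is the limitation Dr. Cho describes: it has no way to jump ahead to the goal and fill in the steps later.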

The ability to make such nonlinear language predictions is closely related to making longer-term predictions in the physical world, he says. "We know how to develop a car that can drive by itself and stay in the lane," he says. But there are unsolved higher-level problems associated with autonomous vehicles where self-learning AI can play a role.

"Instead of saying, how do I change the steering wheel moment to moment, I can just say, 'I need to go to the store,'" Dr. Cho says.

Write to Steven Rosenbush at steven.rosenbush@wsj.com

(END) Dow Jones Newswires

August 13, 2020 12:14 ET (16:14 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

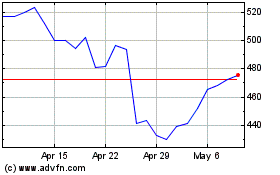

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Aug 2024 to Sep 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Sep 2023 to Sep 2024