Intel Introduces First-of-Its-Kind Self-Learning Chip: Loihi Neuromorphic Test Chip

The following is an opinion editorial provided by Dr. Michael Mayberry, corporate vice president and managing director of Intel Labs at Intel Corporation.

This Smart News Release features multimedia. View the full release here: http://www.businesswire.com/news/home/20170925005364/en/

Intel introduces the Loihi test chip, a first-of-its-kind self-learning neuromorphic chip that mimics how the brain functions by learning to operate based on various modes of feedback from the environment. Announced on Sept. 25, 2017, the extremely energy-efficient chip uses data to learn and make inferences, gets smarter over time and takes a novel approach to computing via asynchronous spiking. (Credit: Intel Corporation)

Imagine a future where complex decisions can be made faster and can adapt over time, and where societal and industrial problems can be solved autonomously using learned experiences.

It’s a future where first responders using image-recognition applications can analyze streetlight camera images and quickly resolve missing- or abducted-person reports.

It’s a future where stoplights automatically adjust their timing to sync with the flow of traffic, reducing gridlock and optimizing starts and stops.

It’s a future where robots are more autonomous and performance efficiency is dramatically increased.

An increasing need for collection, analysis and decision-making from highly dynamic and unstructured natural data is driving demand for compute that may outpace both classic CPU and GPU architectures. To keep pace with the evolution of technology and to drive computing beyond PCs and servers, Intel has been working for the past six years on specialized architectures that can accelerate classic compute platforms. Intel has also recently advanced investments and R&D in artificial intelligence (AI) and neuromorphic computing.

Our work in neuromorphic computing builds on decades of research and collaboration that started with Caltech professor Carver Mead, known for his foundational work in semiconductor design. The combination of chip expertise, physics and biology yielded an environment for new ideas. The ideas were simple but revolutionary: comparing machines with the human brain. The field of study continues to be highly collaborative and supportive of furthering the science.

As part of an effort within Intel Labs, Intel has developed a first-of-its-kind self-learning neuromorphic chip – the Loihi test chip – that mimics how the brain functions by learning to operate based on various modes of feedback from the environment. This extremely energy-efficient chip, which uses data to learn and make inferences, gets smarter over time and does not need to be trained in the traditional way. It takes a novel approach to computing via asynchronous spiking.

We believe AI is in its infancy and that more architectures and methods, like Loihi, will continue to emerge that raise the bar for AI. Neuromorphic computing draws inspiration from our current understanding of the brain’s architecture and its associated computations. The brain’s neural networks relay information with pulses or spikes, modulate the synaptic strengths, or weights, of the interconnections based on the timing of these spikes, and store these changes locally at the interconnections. Intelligent behaviors emerge from the cooperative and competitive interactions between multiple regions within the brain’s neural networks and its environment.
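
The timing-based weight change described above is often modeled in the research literature as spike-timing-dependent plasticity (STDP). The sketch below is a minimal, textbook-style pair rule, not a description of Loihi's learning engine; the constants `A_PLUS`, `A_MINUS` and `TAU_MS` and both function names are assumptions chosen for illustration.

```python
import math

# Hypothetical constants for illustration only; they are not Loihi parameters.
A_PLUS = 0.05    # largest weight increase (input spike arrives before output spike)
A_MINUS = 0.055  # largest weight decrease (input spike arrives after output spike)
TAU_MS = 20.0    # how quickly the effect fades as the two spikes move apart in time


def stdp_delta(t_pre_ms: float, t_post_ms: float) -> float:
    """Return the weight change for one pair of pre- and post-synaptic spikes."""
    dt = t_post_ms - t_pre_ms
    if dt > 0:   # the input spike came first, so strengthen the connection
        return A_PLUS * math.exp(-dt / TAU_MS)
    if dt < 0:   # the output spike came first, so weaken the connection
        return -A_MINUS * math.exp(dt / TAU_MS)
    return 0.0


def update_weight(weight: float, t_pre_ms: float, t_post_ms: float,
                  w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Apply the change to a single synapse and keep the weight within bounds."""
    return min(w_max, max(w_min, weight + stdp_delta(t_pre_ms, t_post_ms)))
```

The update uses only the two spike times and the weight of the synapse being changed, which is what allows such a change to be stored locally at the interconnection.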

Machine learning models such as deep learning have made tremendous recent advancements by using extensive training datasets to recognize objects and events. However, unless their training sets have specifically accounted for a particular element, situation or circumstance, these machine learning systems do not generalize well.

The potential benefits from self-learning chips are limitless. In one example, a person’s heartbeat readings under various conditions – after jogging, following a meal or before going to bed – are fed to a neuromorphic-based system that parses the data to determine a “normal” heartbeat. The system can then continuously monitor incoming heart data in order to flag patterns that do not match the “normal” pattern. The system could be personalized for any user.
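
The learn-then-flag logic in the heartbeat example can be sketched with ordinary statistics. This is a conventional stand-in, not a spiking network and not how Loihi works; the function names, the three-standard-deviation threshold and the sample readings are all assumptions made up for illustration.

```python
from statistics import mean, stdev


def learn_baseline(readings_by_context):
    """Summarize each context's 'normal' heart rate as (mean, standard deviation)."""
    return {ctx: (mean(vals), stdev(vals)) for ctx, vals in readings_by_context.items()}


def is_abnormal(baseline, context, bpm, threshold=3.0):
    """Flag a reading more than `threshold` deviations from that context's mean."""
    mu, sigma = baseline[context]
    return abs(bpm - mu) > threshold * sigma


# Made-up readings in beats per minute, for illustration only.
history = {
    "after_jogging": [128, 135, 131, 140, 126],
    "after_meal": [78, 82, 80, 85, 79],
    "before_bed": [60, 62, 58, 61, 59],
}
baseline = learn_baseline(history)
```

With this data, `is_abnormal(baseline, "before_bed", 130)` is flagged while `is_abnormal(baseline, "after_jogging", 130)` is not, which shows why the "normal" pattern has to be learned per context and per person.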

This type of logic could also be applied to other use cases, such as cybersecurity, where an abnormality or difference in data streams could identify a breach or a hack because the system has learned the “normal” under various contexts.

Introducing the Intel Loihi test chip

The Intel Loihi research test chip includes digital circuits that mimic the brain’s basic mechanics, making machine learning faster and more efficient while requiring less compute power. Neuromorphic chip models draw inspiration from how neurons communicate and learn, using spikes and plastic synapses that can be modulated based on timing. This could help computers self-organize and make decisions based on patterns and associations.
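
To make "communicating with spikes" concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a common simplified model in the spiking-network literature. It is not a description of Loihi's circuits; the function name and the `threshold`, `leak` and `reset` values are assumptions for illustration.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes."""
    potential = reset
    spike_times = []
    for step, current in enumerate(input_current):
        # The stored potential decays a little, then the new input is added.
        potential = leak * potential + current
        if potential >= threshold:
            spike_times.append(step)  # emit a spike
            potential = reset         # and start accumulating again
    return spike_times
```

A steady input of 0.5 per step, as in `simulate_lif([0.5] * 6)`, makes the neuron fire periodically, while a weak input such as `simulate_lif([0.05] * 50)` never reaches the threshold, so the neuron stays silent. Information is carried by when spikes occur, not by a continuous output value.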

The Intel Loihi test chip offers highly flexible on-chip learning and combines training and inference on a single chip. This allows machines to be autonomous and to adapt in real time instead of waiting for the next update from the cloud. Researchers have demonstrated learning at a rate that is a 1 million-fold improvement over other typical spiking neural nets, as measured by total operations to achieve a given accuracy when solving MNIST digit recognition problems. Compared to technologies such as convolutional neural networks and deep learning neural networks, the Intel Loihi test chip uses many fewer resources on the same task.

The self-learning capabilities prototyped by this test chip have enormous potential to improve automotive and industrial applications as well as personal robotics – any application that would benefit from autonomous operation and continuous learning in an unstructured environment, for example, recognizing the movement of a car or bike.

Further, the test chip is up to 1,000 times more energy-efficient than the general-purpose computing required for typical training systems.

In the first half of 2018, the Intel Loihi test chip will be shared with leading university and research institutions with a focus on advancing AI.

Additional Highlights

The Loihi test chip’s features include:

- Fully asynchronous neuromorphic many-core mesh that supports a wide range of sparse, hierarchical and recurrent neural network topologies with each neuron capable of communicating with thousands of other neurons.

- Each neuromorphic core includes a learning engine that can be programmed to adapt network parameters during operation, supporting supervised, unsupervised, reinforcement and other learning paradigms.

- Fabrication on Intel’s 14 nm process technology.

- A total of 130,000 neurons and 130 million synapses.

- Development and testing of several algorithms with high algorithmic efficiency for problems including path planning, constraint satisfaction, sparse coding, dictionary learning, and dynamic pattern learning and adaptation.
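
As a quick sanity check on the figures in the list above, the neuron and synapse totals imply an average number of synapses per neuron. The short calculation below uses only those two published numbers; the variable names are illustrative, and the result is an average, not a per-neuron limit.

```python
NEURONS = 130_000        # total neurons, from the feature list
SYNAPSES = 130_000_000   # total synapses, from the feature list

# Average number of synapses per neuron across the whole chip.
avg_synapses_per_neuron = SYNAPSES / NEURONS
print(f"Average synapses per neuron: {avg_synapses_per_neuron:,.0f}")
```

An average on the order of a thousand connections per neuron is consistent with the statement that each neuron can communicate with thousands of others.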

What’s next?

Spurred by advances in computing and algorithmic innovation, the transformative power of AI is expected to impact society on a spectacular scale. Today, we at Intel are applying our strength in driving Moore’s Law and manufacturing leadership to bring to market a broad range of products (Intel® Xeon® processors, Intel® Nervana™ technology, Intel Movidius™ technology and Intel FPGAs) that address the unique requirements of AI workloads from the edge to the data center and cloud.

Both general-purpose compute and custom hardware and software come into play at all scales. The Intel® Xeon Phi™ processor, widely used in scientific computing, has generated some of the world’s biggest models to interpret large-scale scientific problems, and the Movidius Neural Compute Stick is an example of a 1-watt deployment of previously trained models.

As AI workloads grow more diverse and complex, they will test the limits of today’s dominant compute architectures and precipitate new disruptive approaches. Looking to the future, Intel believes that neuromorphic computing offers a way to provide exascale performance in a construct inspired by how the brain works.

I hope you will follow the exciting milestones coming from Intel Labs in the next few months as we bring concepts like neuromorphic computing to the mainstream in order to support the world’s economy for the next 50 years. In a future with neuromorphic computing, all of what you can imagine – and more – moves from possibility to reality, as the flow of intelligence and decision-making becomes more fluid and accelerated.

Intel’s vision for developing innovative compute architectures remains steadfast, and we know what the future of compute looks like because we are building it today.

Intel Corporation, Ellen Healy, ellen.healy@intel.com

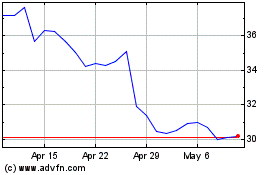

Intel (NASDAQ:INTC)

Historical Stock Chart

From Apr 2024 to May 2024

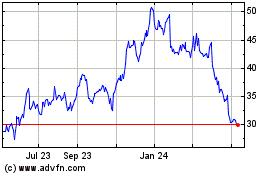

Intel (NASDAQ:INTC)

Historical Stock Chart

From May 2023 to May 2024