By Christopher Mims

During modern computing's first epoch, one trend reigned

supreme: Moore's Law.

Actually a prediction by Intel Corp. co-founder Gordon Moore

rather than any sort of physical law, Moore's Law held that the

number of transistors on a chip doubles roughly every two years. It

also meant that performance of those chips -- and the computers

they powered -- increased by a substantial amount on roughly the

same timetable. This formed the industry's core, the glowing

crucible from which sprang trillion-dollar technologies that

upended almost every aspect of our day-to-day existence.

As chip makers have reached the limits of atomic-scale circuitry

and the physics of electrons, Moore's Law has slowed, and some say

it's over. But a different law, potentially no less consequential

for computing's next half century, has arisen.

I call it Huang's Law, after Nvidia Corp. chief executive and

co-founder Jensen Huang. It describes how the silicon chips that

power artificial intelligence more than double in performance every

two years. While the increase can be attributed to both hardware

and software, its steady progress makes it a unique enabler of

everything from autonomous cars, trucks and ships to the face,

voice and object recognition in our personal gadgets.

Between November 2012 and this May, performance of Nvidia's

chips increased 317 times for an important class of AI

calculations, says Bill Dally, chief scientist and senior vice

president of research at Nvidia. On average, in other words, the

performance of these chips more than doubled every year, a rate of

progress that makes Moore's Law pale in comparison.
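
The arithmetic behind that claim can be checked with a short sketch. It assumes "this May" means May 2020, which makes the span about 7.5 years, and it treats Moore's Law as a clean doubling every two years. The function names are illustrative and do not come from Nvidia.

```python
import math

def annual_growth_factor(total_gain: float, years: float) -> float:
    """Constant yearly multiplier that compounds to total_gain over the span."""
    return total_gain ** (1.0 / years)

def doubling_time_years(total_gain: float, years: float) -> float:
    """Years needed for one doubling at that constant compound rate."""
    return years * math.log(2) / math.log(total_gain)

# Assumption: November 2012 to May 2020 is roughly 7.5 years.
YEARS = 7.5
# Figure quoted from Bill Dally in the article.
TOTAL_GAIN = 317.0

factor = annual_growth_factor(TOTAL_GAIN, YEARS)
doubling = doubling_time_years(TOTAL_GAIN, YEARS)

# Idealized Moore's Law pace for comparison: one doubling every two years.
moore_gain = 2 ** (YEARS / 2)

print(f"Implied yearly multiplier: {factor:.2f}x")
print(f"Implied doubling time: {doubling * 12:.1f} months")
print(f"Gain at a two-year doubling pace: {moore_gain:.1f}x")
```

Under these assumptions the yearly multiplier comes out a little above 2, which matches the "more than doubled every year" description. A strict two-year doubling over the same span would yield a gain of only about 13 times.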

Nvidia's specialty has long been graphics processing units, or

GPUs, which operate efficiently when there are many independent

tasks to be done simultaneously. Central processing units, or CPUs,

like the kind that Intel specializes in, are by contrast much less

efficient at such parallel work but better at executing a single, serial task very

quickly. You can't chop up every computing process so that it can

be efficiently handled by a GPU, but for the ones you can --

including many AI applications -- you can perform them many times as

fast while expending the same power.

Intel was a primary driver of Moore's Law, but it was hardly the

only one. Perpetuating it required tens of thousands of engineers

and billions of dollars in investment across hundreds of companies

around the globe. Similarly, Nvidia isn't alone in driving Huang's

Law -- and in fact its own type of AI processing might, in some

applications, be losing its appeal. That's probably a major reason

it has moved this month to acquire chip architect Arm Holdings,

another company key to ongoing improvement in the speed of AI, for

$40 billion.

The pace of improvement in AI-specific hardware will make

possible a range of applications both utopian and dystopian, from

the end of automobile accidents to ubiquitous surveillance. But

it's also enabling, right now, a less fantastical application with

huge implications for how we shop and the fate of millions of

retail jobs: cashierless checkout.

San Francisco-based tech company Standard recently announced a

deal with Circle K to turn some of its stores into "grab and go"

experiences in the mold of Amazon.com Inc.'s Amazon Go stores. The

three-year-old startup installs cameras throughout stores, then

routes video from them to Nvidia-powered systems in the back, which

perform tens of trillions of calculations a second. As shoppers

grab objects off store shelves, the system tallies it all, and

bills them through their mobile devices as they walk out.

For perspective, a system performing this many operations a

second is faster than the most powerful supercomputer in the world

was as recently as 2012, at least at AI inference tasks.

"Honestly we could do nothing and just wait and Nvidia will drop

our prices every year," says Jordan Fisher, Standard's founder and

CEO.

Another category that Huang's Law affects is autonomous

vehicles. At San Diego-based TuSimple, a rapidly expanding

autonomous-trucking startup, the challenge is making a self-driving

system that can fit the power and space limitations of a

diesel-powered semi-trailer truck. On a typical TuSimple vehicle,

that means cramming the entire system, which can't draw more than 5

kilowatts, into an air-cooled cabinet in the sleeper cab.

Given such power constraints, what matters most is performance

per watt. TuSimple is seeing performance double every year on its

Nvidia-powered systems, says Xiaodi Hou, the company's co-founder

and chief technology officer.

Similar boosts in performance have been occurring since the

mid-2000s in a very different area of AI: our mobile phones.

In 2017, Apple introduced the iPhone 8, which included its

Neural Engine. Apple designed the chip specifically to run

machine-learning tasks, which are important to many kinds of AI.

(Its chip-manufacturing partner is Taiwan Semiconductor

Manufacturing Co.)

Apple's decision to make the chip accessible to any app on the

phone -- as well as the introduction of comparable chips and

software on Android phones -- allowed for new kinds of AI

businesses, says Bruno Fernandez-Ruiz, co-founder and chief

technology officer of Nexar, a company that makes AI-powered

dashboard cameras for cars. By processing on users' phones streams

of video captured by dashboard cameras, Nexar's technology can

alert drivers to imminent hazards.

Uses of mobile AI are multiplying, in phones and smart devices

ranging from dishwashers to door locks to lightbulbs, as well as

the millions of sensors making their way to cities, factories and

industrial facilities. And chip designer Arm Holdings -- whose

patents Apple, among many tech companies large and small, licenses

for its iPhone chips -- is at the center of this revolution.

Over the last three to five years, machine-learning networks

have been increasing by orders of magnitude in efficiency, says

Dennis Laudick, vice president of marketing in Arm's

machine-learning group. "Now it's more about making things work in

a smaller and smaller environment," he adds. Arm's smallest and

most energy-sipping chips, tiny enough to be powered by a watch

battery, can now enable cameras to recognize objects in real

time.

This movement of AI processing from the cloud to the "edge" --

that is, on the devices themselves -- explains Nvidia's desire to

buy Arm, says Nexar co-founder and CEO Eran Shir. Nvidia has a near

monopoly on AI processing in the cloud. But where two years ago,

Nexar performed 40% of its data processing in the cloud, Arm-based

chips have enabled it to do much more of that processing in mobile

devices, and faster, since the data doesn't have to be transmitted over

the internet first. Today, the cloud is doing only 15% of the work.

In addition, some functions, like a vision-based parking assistant,

were not even possible until recently, when the chips in phones

became much more capable.

Experts agree that the phenomenon I've labeled Huang's Law is

advancing at a blistering pace. However, its exact cadence can be

difficult to nail down. The nonprofit OpenAI says that, based on a

classic AI image-recognition test, performance doubles roughly

every year and a half. But it's been a challenge even agreeing on

the definition of "performance." A consortium of researchers from

Google, Baidu, Harvard, Stanford and practically every other major

tech company is collaborating on an effort to better and more

objectively measure it.

Another caveat for Huang's Law is that it describes processing

power that can't be thrown at every application. Even in a

stereotypically AI-centric task like autonomous driving, most of

the code the system is running requires the CPU, says TuSimple's

Mr. Hou. Dr. Dally of Nvidia acknowledges this problem, and says

that when engineers radically speed up one part of a calculation,

whatever remains that can't be sped up naturally becomes the

bottleneck.
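
The bottleneck Dr. Dally describes is commonly formalized as Amdahl's Law, a name the article itself does not use. The sketch below shows how the unaccelerated remainder caps the overall gain. The 50% split is purely hypothetical and is not a figure from TuSimple or Nvidia.

```python
def overall_speedup(accelerated_fraction: float, accel_speedup: float) -> float:
    """Amdahl's Law: whole-workload speedup when only part of it gets faster.

    accelerated_fraction: share of the original run time that benefits (0 to 1).
    accel_speedup: how many times faster that share becomes.
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if accel_speedup <= 0:
        raise ValueError("accel_speedup must be positive")
    remaining = 1.0 - accelerated_fraction       # part that stays on the CPU
    accelerated = accelerated_fraction / accel_speedup
    return 1.0 / (remaining + accelerated)

# Hypothetical split: half the run time is AI math that a GPU can accelerate.
HYPOTHETICAL_FRACTION = 0.5

for gpu_gain in (2, 10, 317):
    total = overall_speedup(HYPOTHETICAL_FRACTION, gpu_gain)
    print(f"GPU part {gpu_gain}x faster -> whole system {total:.2f}x faster")
```

With half the work stuck on the CPU, even a 317-fold gain on the accelerated half leaves the whole system less than twice as fast, which is why the remaining serial code becomes the limiting factor.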

It's also possible that, like Moore's Law before it, Huang's Law

will run out of steam. That could happen within a decade, says

Steve Roddy, vice president of product marketing in Arm's

machine-learning group. But it could enable much in that relatively

short time, from driverless cars to factories and homes that sense

and respond to their environments.

(END) Dow Jones Newswires

September 19, 2020 00:14 ET (04:14 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

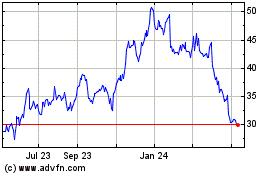

Intel (NASDAQ:INTC)

Historical Stock Chart

From Aug 2024 to Sep 2024

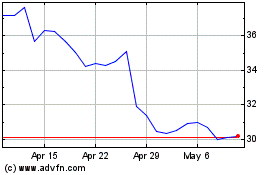

Intel (NASDAQ:INTC)

Historical Stock Chart

From Sep 2023 to Sep 2024