By Jeff Horwitz and Deepa Seetharaman

A Facebook Inc. team had a blunt message for senior executives.

The company's algorithms weren't bringing people together. They

were driving people apart.

"Our algorithms exploit the human brain's attraction to

divisiveness," read a slide from a 2018 presentation. "If left

unchecked," it warned, Facebook would feed users "more and more

divisive content in an effort to gain user attention & increase

time on the platform."

That presentation went to the heart of a question dogging

Facebook almost since its founding: Does its platform aggravate

polarization and tribal behavior?

The answer it found, in some cases, was yes.

Facebook had kicked off an internal effort to understand how its

platform shaped user behavior and how the company might address

potential harms. Chief Executive Mark Zuckerberg had in public and

private expressed concern about "sensationalism and

polarization."

But in the end, Facebook's interest was fleeting. Mr. Zuckerberg

and other senior executives largely shelved the basic research,

according to previously unreported internal documents and people

familiar with the effort, and weakened or blocked efforts to apply

its conclusions to Facebook products.

Facebook policy chief Joel Kaplan, who played a central role in

vetting proposed changes, argued at the time that efforts to make

conversations on the platform more civil were "paternalistic," said

people familiar with his comments.

Another concern, they and others said, was that some proposed

changes would have disproportionately affected conservative users

and publishers, at a time when the company faced accusations from

the right of political bias.

Facebook revealed few details about the effort and has divulged

little about what became of it. In 2020, the questions the effort

sought to address are even more acute, as a charged presidential

election looms and Facebook has been a conduit for conspiracy

theories and partisan sparring about the coronavirus pandemic.

In essence, Facebook is under fire for making the world more

divided. Many of its own experts appeared to agree -- and to

believe Facebook could mitigate many of the problems. The company

chose not to.

Mr. Kaplan in a recent interview said he and other executives

had approved certain changes meant to improve civic discussion. In

other cases where proposals were blocked, he said, he was trying to

"instill some discipline, rigor and responsibility into the

process" as he vetted the effectiveness and potential unintended

consequences of changes to how the platform operated.

Internally, the vetting process earned a nickname: "Eat Your

Veggies."

Americans were drifting apart on fundamental societal issues

well before the creation of social media, decades of Pew Research

Center surveys have shown. But 60% of Americans think the country's

biggest tech companies are helping further divide the country,

while only 11% believe they are uniting it, according to a

Gallup-Knight survey in March.

At Facebook, "There was this soul-searching period after 2016

that seemed to me this period of really sincere, 'Oh man, what if

we really did mess up the world?' " said Eli Pariser, co-director

of Civic Signals, a project that aims to build healthier digital

spaces, and who has spoken to Facebook officials about

polarization.

Mr. Pariser said that started to change after March 2018, when

Facebook got in hot water after disclosing that Cambridge

Analytica, the political-analytics startup, improperly obtained

Facebook data about tens of millions of people. The shift has

gained momentum since, he said: "The internal pendulum swung really

hard to 'the media hates us no matter what we do, so let's just

batten down the hatches.' "

In a sign of how far the company has moved, Mr. Zuckerberg in

January said he would stand up "against those who say that new

types of communities forming on social media are dividing us."

People who have heard him speak privately said he argues social

media bears little responsibility for polarization.

He argues the platform is in fact a guardian of free speech,

even when the content is objectionable -- a position that drove

Facebook's decision not to fact-check political advertising ahead

of the 2020 election.

'Integrity Teams'

Facebook launched its research on divisive content and behavior

at a moment when it was grappling with whether its mission to

"connect the world" was good for society.

Fixing the polarization problem would be difficult, requiring

Facebook to rethink some of its core products. Most notably, the

project forced Facebook to consider how it prioritized "user

engagement" -- a metric involving time spent, likes, shares and

comments that for years had been the lodestar of its system.

Championed by Chris Cox, Facebook's chief product officer at the

time and a top deputy to Mr. Zuckerberg, the work was carried out

over much of 2017 and 2018 by engineers and researchers assigned to

a cross-jurisdictional task force dubbed "Common Ground" and

employees in newly created "Integrity Teams" embedded around the

company.

Even before the teams' 2017 creation, Facebook researchers had

found signs of trouble. A 2016 presentation that names as author a

Facebook researcher and sociologist, Monica Lee, found extremist

content thriving in more than one-third of large German political

groups on the platform. Swamped with racist, conspiracy-minded and

pro-Russian content, the groups were disproportionately influenced

by a subset of hyperactive users, the presentation notes. Most of

them were private or secret.

The high number of extremist groups was concerning, the

presentation says. Worse was Facebook's realization that its

algorithms were responsible for their growth. The 2016 presentation

states that "64% of all extremist group joins are due to our

recommendation tools" and that most of the activity came from the

platform's "Groups You Should Join" and "Discover" algorithms: "Our

recommendation systems grow the problem."

Ms. Lee, who remains at Facebook, didn't respond to inquiries.

Facebook declined to respond to questions about how it addressed

the problem in the presentation, which other employees said wasn't

unique to Germany or the Groups product. In a presentation at an

international security conference in February, Mr. Zuckerberg said

the company tries not to recommend groups that break its rules or

are polarizing.

"We've learned a lot since 2016 and are not the same company

today," a Facebook spokeswoman said. "We've built a robust

integrity team, strengthened our policies and practices to limit

harmful content, and used research to understand our platform's

impact on society so we continue to improve."

The Common Ground team sought to tackle the polarization problem

directly, said people familiar with the team. Data scientists

involved with the effort found some interest groups -- often

hobby-based groups with no explicit ideological alignment --

brought people from different backgrounds together constructively.

Other groups appeared to incubate impulses to fight, spread

falsehoods or demonize a population of outsiders.

In keeping with Facebook's commitment to neutrality, the teams

decided Facebook shouldn't police people's opinions, stop conflict

on the platform, or prevent people from forming communities. The

vilification of one's opponents was the problem, according to one

internal document from the team.

"We're explicitly not going to build products that attempt to

change people's beliefs," one 2018 document states. "We're focused

on products that increase empathy, understanding, and humanization

of the 'other side.' "

Hot-button issues

One proposal sought to salvage conversations in groups derailed

by hot-button issues, according to the people familiar with the

team and internal documents. If two members of a Facebook group

devoted to parenting fought about vaccinations, the moderators

could establish a temporary subgroup to host the argument or limit

the frequency of posting on the topic to avoid a public flame

war.

Another idea, documents show, was to tweak recommendation

algorithms to suggest a wider range of Facebook groups than people

would ordinarily encounter.

Building these features and combating polarization might come at

a cost of lower engagement, the Common Ground team warned in a

mid-2018 document, describing some of its own proposals as

"antigrowth" and requiring Facebook to "take a moral stance."

Taking action would require Facebook to form partnerships with

academics and nonprofits to give credibility to changes affecting

public conversation, the document says. This was becoming difficult

as the company slogged through controversies after the 2016

presidential election.

"People don't trust us," said a presentation created in the

summer of 2018.

The engineers and data scientists on Facebook's Integrity Teams

-- chief among them, scientists who worked on newsfeed, the stream

of posts and photos that greet users when they visit Facebook --

arrived at the polarization problem indirectly, according to people

familiar with the teams. Asked to combat fake news, spam, clickbait

and inauthentic users, the employees looked for ways to diminish

the reach of such ills. One early discovery: Bad behavior came

disproportionately from a small pool of hyperpartisan users.

A second finding: The U.S. had a larger infrastructure of

accounts and publishers on the far right than on the far left.

Outside observers were documenting the same phenomenon. The gap

meant even seemingly apolitical actions such as reducing the spread

of clickbait headlines -- along the lines of "You Won't Believe

What Happened Next" -- affected conservative speech more than

liberal content in aggregate.

That was a tough sell to Mr. Kaplan, said people who heard him

discuss Common Ground and Integrity proposals. A former deputy

chief of staff to George W. Bush, Mr. Kaplan became more involved

in content-ranking decisions after 2016 allegations Facebook had

suppressed trending news stories from conservative outlets. An

internal review didn't substantiate the claims of bias, Facebook's

then-general counsel Colin Stretch told Congress, but the damage to

Facebook's reputation among conservatives had been done.

Every significant new integrity-ranking initiative had to seek

the approval of not just engineering managers but also

representatives of the public policy, legal, marketing and

public-relations departments.

Lindsey Shepard, a former Facebook product-marketing director

who helped set up the Eat Your Veggies process, said it arose from

what she believed were reasonable concerns that overzealous

engineers might let their politics influence the platform.

"Engineers that were used to having autonomy maybe over-rotated

a bit" after the 2016 election to address Facebook's perceived

flaws, she said. The meetings helped keep that in check. "At the

end of the day, if we didn't reach consensus, we'd frame up the

different points of view, and then they'd be raised up to

Mark."

Scuttled projects

Disapproval from Mr. Kaplan's team or Facebook's communications

department could scuttle a project, said people familiar with the

effort. Negative policy-team reviews killed efforts to build a

classification system for hyperpolarized content. Likewise, the Eat

Your Veggies process shut down efforts to suppress clickbait about

politics more than on other topics.

Initiatives that survived were often weakened. Mr. Cox wooed

Carlos Gomez Uribe, former head of Netflix Inc.'s recommendation

system, to lead the newsfeed Integrity Team in January 2017. Within

a few months, Mr. Uribe began pushing to reduce the outsize impact

hyperactive users had.

Under Facebook's engagement-based metrics, a user who likes,

shares or comments on 1,500 pieces of content has more influence on

the platform and its algorithms than one who interacts with just 15

posts, allowing "super-sharers" to drown out less-active users.

Accounts with hyperactive engagement were far more partisan on

average than normal Facebook users, and they were more likely to

behave suspiciously, sometimes appearing on the platform as much as

20 hours a day and engaging in spam-like behavior. The behavior

suggested some were either people working in shifts or bots.

One proposal Mr. Uribe's team championed, called "Sparing

Sharing," would have reduced the spread of content

disproportionately favored by hyperactive users, according to

people familiar with it. Its effects would be heaviest on content

favored by users on the far right and left. Middle-of-the-road

users would gain influence.

Mr. Uribe called it "the happy face," said some of the people.

Facebook's data scientists believed it could bolster the platform's

defenses against spam and coordinated manipulation efforts of the

sort Russia undertook during the 2016 election.

Mr. Kaplan and other senior Facebook executives pushed back on

the grounds it might harm a hypothetical Girl Scout troop, said

people familiar with his comments. Suppose, Mr. Kaplan asked them,

that the girls became Facebook super-sharers to promote cookies?

Mitigating the reach of the platform's most dedicated users would

unfairly thwart them, he said.

Mr. Kaplan in the recent interview said he didn't remember

raising the Girl Scout example but was concerned about the effect

on publishers who happened to have enthusiastic followings.

The debate got kicked up to Mr. Zuckerberg, who heard out both

sides in a short meeting, said people briefed on it. His response:

Do it, but cut the weighting by 80%. Mr. Zuckerberg also signaled

he was losing interest in the effort to recalibrate the platform in

the name of social good, they said, asking that they not bring him

something like that again.

Mr. Uribe left Facebook and the tech industry within the year.

He declined to discuss his work at Facebook in detail but confirmed

his advocacy for the Sparing Sharing proposal. He said he left

Facebook because of his frustration with company executives and

their narrow focus on how integrity changes would affect American

politics. While proposals like his did disproportionately affect

conservatives in the U.S., he said, in other countries the opposite

was true.

Other projects met Sparing Sharing's fate: weakened, not killed.

Partial victories included efforts to promote news stories

garnering engagement from a broad user base, not just partisans,

and penalties for publishers that repeatedly shared false news or

directed users to ad-choked pages.

The tug of war was resolved in part by the growing furor over

the Cambridge Analytica scandal. In a September 2018 reorganization

of Facebook's newsfeed team, managers told employees the company's

priorities were shifting "away from societal good to individual

value," said people present for the discussion. If users wanted to

routinely view or post hostile content about groups they didn't

like, Facebook wouldn't suppress it if the content didn't

specifically violate the company's rules.

Mr. Cox left the company several months later after

disagreements regarding Facebook's pivot toward private encrypted

messaging. He hadn't won most fights he had engaged in on integrity

ranking and Common Ground product changes, people involved in the

effort said, and his departure left the remaining staffers working

on such projects without a high-level advocate.

The Common Ground team disbanded. The Integrity Teams still

exist, though many senior staffers left the company or headed to

Facebook's Instagram platform.

Mr. Zuckerberg announced in 2019 that Facebook would take down

content violating specific standards but where possible take a

hands-off approach to policing material not clearly violating its

standards.

"You can't impose tolerance top-down," he said in an October

speech at Georgetown University. "It has to come from people

opening up, sharing experiences, and developing a shared story for

society that we all feel we're a part of. That's how we make

progress together."

Write to Jeff Horwitz at Jeff.Horwitz@wsj.com and Deepa

Seetharaman at Deepa.Seetharaman@wsj.com

(END) Dow Jones Newswires

May 26, 2020 11:53 ET (15:53 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

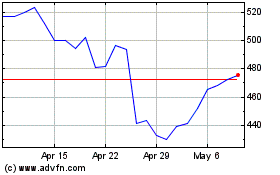

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024