By Christopher Mims

If you want to understand the limitations of the algorithms that

control what we see and hear -- and on which we base many of our

decisions -- take a look at Facebook Inc.'s experimental remedy for revenge

porn.

To stop an ex from sharing nude pictures of you, you have to

share nudes with Facebook itself. Not uncomfortable enough?

Facebook also says a real live human will have to check them

out.

Without that human review, it would be too easy to exploit

Facebook's antirevenge-porn service to take down legitimate images.

Artificial intelligence, it turns out, has a hard time telling the

difference between your naked body and a nude by Titian.

The internet giants that tout their AI bona fides have tried to

make their algorithms as human-free as possible, and that's been a

problem. It has become increasingly apparent over the past year

that building systems without humans "in the loop" -- especially in

the case of Facebook and the ads it linked to 470 "inauthentic"

Russian-backed accounts -- can lead to disastrous outcomes, as

actual human brains figure out how to exploit them.

Whether it's winning at games like Go or keeping watch for

Russian influence operations, the best AI-powered systems require

humans to play an active role in their creation, tending and

operation. Far from displacing workers, this combination is

spawning new nonengineer jobs every day, and the preponderance of

evidence suggests the boom will continue for the foreseeable

future.

Facebook, of course, is now a prime example of this trend. The

company recently announced it would add 10,000 content moderators

to the 10,000 it already employs -- a hiring surge that will impact

its future profitability, said Chief Executive Mark Zuckerberg.

And Facebook is hardly alone. Alphabet Inc.'s Google has long

employed humans alongside AI to eliminate ads that violate its

terms of service, ferret out fake news and take down extremist

YouTube videos. Google doesn't disclose how many people are looped

into its content moderation, search optimization and other

algorithms, but a company spokeswoman says the figure is in the

thousands -- and growing.

Twitter has its own teams to moderate content, though the

company is largely silent about how it accomplishes this, other

than touting its system's ability to automatically delete 95% of

terrorists' accounts.

Almost every big company using AI to automate processes has a

need for humans as a part of that AI, says Panos Ipeirotis, a

professor at New York University's Stern School of Business.

America's five largest financial institutions employ teams of

nonengineers as part of their AI systems, says Dr. Ipeirotis, who

consults with banks.

AI's constant hunger for human brains is based on our increasing

demand for services. The more we ask for, the less likely it is that a

computer algorithm can go it alone -- while the combination can be

more effective and efficient. For example, bank workers who

previously read every email in search of fraud now make better use

of their time investigating emails the AI flags as suspicious, says

Dr. Ipeirotis.

What AI Can (and Can't) Do

A machine-learning-based AI system is a piece of software that

learns, almost like a primitive insect. That means that it can't be

programmed -- it must be taught.

To teach such systems, humans feed them examples, and the systems need

truckloads. To build an AI filter to identify extremist content on

YouTube, humans at Google manually reviewed over a million videos

to flag qualifying examples, says a Google spokeswoman.

An algorithm can only be as good as "the quantity and quality of

the training data to get [it] going," says Robin Bordoli, CEO of

CrowdFlower Inc., which provides human labor to companies that need

people to train and maintain AI algorithms, from auto makers to

internet giants to financial institutions.

Even when an AI has been trained, its judgment is never perfect.

Human oversight is still needed, especially with material in which

context matters, such as those extremist YouTube posts. While AI

can take down 83% before a single human flags them, says Google,

the remaining 17% needs humans. But that human review serves as further

training: The reviewers' decisions can then be fed back into the algorithm to

improve it.

Relying on AI can lead to false positives, as when the company

pulls down legitimate content that its algorithms think might be

offensive.

There are many cases when AI can barely perform a task at all,

as in the case of Facebook's nude pic filter. Transcribing receipts

and business cards, tagging videos and moderating adult content are

all tasks that "should be easy for machine learning, but in

practice are too unstructured," says Vili Lehdonvirta, a senior

research fellow at the Oxford Internet Institute in the United

Kingdom.

Dr. Lehdonvirta maintains the Online Labor Index, a real-time

estimate of the number of people employed for these sorts of tasks.

By his calculations, the number of tasks posted to crowdsourced

online labor platforms, which include this kind of work, is up 40%

in the past year alone.

Systems at risk of being gamed by fraudsters also require

constant human attention, says Dr. Ipeirotis. AIs, once trained,

are inexhaustible, but this is a curse as much as a blessing:

People who outsmart the algorithm can multiply their results a

millionfold.

Humans, on the other hand, are slower than AI, but can identify

patterns based on very little information. Any time a system must

deal with bad actors -- like when an entity posing as an American

on Twitter is actually a Russian agent -- there is no replacement

for live staffers.

A Growing Workforce

Some of this labor happens through outsourced systems like

CrowdFlower and Mechanical Turk, Amazon.com Inc.'s system for

outsourcing individual computer microtasks to a global workforce of

more than 500,000 people.

Across the globe, between 10,000 and 20,000 people a week pick

up online piecework, flagging porn in online forums, teaching

self-driving systems to identify pedestrians and training

facial-recognition algorithms, Dr. Lehdonvirta estimates. When you

include companies' own internal teams, there are probably hundreds

of thousands of humans, world-wide, whose work is sold as AI, he

says.

Dr. Lehdonvirta's estimates don't include the world's biggest

human-AI workforce: China's censors. Estimates for the Chinese

government's operation alone range from 100,000 to one million. In

addition, every Chinese internet company that distributes or hosts

content must have its own censors, typically one per 100,000

users.

Facebook isn't in China. One Chinese internet executive told The

Wall Street Journal that if it were, it would need 20,000 content

moderators -- for video alone.

(END) Dow Jones Newswires

November 12, 2017 07:14 ET (12:14 GMT)

Copyright (c) 2017 Dow Jones & Company, Inc.

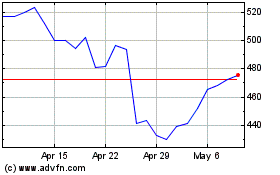

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024