By Asa Fitch

Artificial intelligence has mastered some of the world's most

complex games, beating top players at chess, Go and even StarCraft

II, a real-time strategy computer game. But where AI stumbles is in

cracking some of the seemingly simple games, the ones that require

an ability to communicate and collaborate.

That could be about to change. Researchers at Alphabet Inc.'s

Google Brain project and DeepMind unit, which cooked up the AI

programs that defeated humans at Go and StarCraft II, have set

their sights on a new game: Hanabi, a card game in which players

cooperate with one another. Everyone either wins or loses based on

how well they communicate through their play.

If the researchers can figure out how to create a Hanabi master,

that could represent a significant advance for AI, possibly

yielding insights into how machines can interact more fluidly with

humans in contexts such as conversation and autonomous driving, the

researchers involved in this project say. "In everyday life we

don't spend time competing with each other -- we spend time

communicating and cooperating," says Jakob Foerster, a University

of Oxford researcher and a lead author of a paper published in

February announcing the challenge. "Hanabi as a game is all about

communicating and cooperating, and that hasn't been explored" in

the context of AI and games, he says.

Understanding hints

Invented in 2010, Hanabi is played by two to five people who

work together to play cards of five different colors in the right

order. The twist: While all players can see each other's hands,

they can't see their own. To bridge that information gap, players

are allowed to give each other hints about their hands, but they're

limited to naming colors or numbers, leaving players to infer what

cards they should play. They also can only make a limited number of

hints in a given game.

It is that act of communicating efficiently that makes Hanabi

scientifically intriguing. Humans, for example, may naturally

interpret a co-player's hint as an indication that the named card

is playable. But machines won't intrinsically understand such

cues.

"These cooperative games are different and hard because you need

to be able to agree on a way that you're communicating with each

other in order to play the game well, and doing that agreement

requires collaboration among all the players," says Nolan Bard, a

DeepMind researcher working on the challenge and a lead author of

the February paper.

Efforts so far have crafted Hanabi-playing programs that can win

a lot of the time -- but only when they play with other similarly

programmed bots. Having teammates whose playing styles aren't

previously known, or what DeepMind researchers call "ad hoc" play,

is where the researchers see the greatest challenge and the richest

opportunity to find real-world applications.

Assumptions and inferences

According to the researchers, humans constantly construct a

"theory of mind" about other people, making the assumption that

others think and act like us and predicting their actions with that

in mind. When someone stands on the street corner, for instance,

drivers assume she is thinking about crossing the road.

Embedding such theories of mind in machines, the researchers

suggest, could improve how autonomous vehicles act when they

encounter new situations, allowing them to grasp what people's

actions suggest they are trying to do. Robots could be taught,

for example, to understand contextual cues in speech to allow them

to infer what the speaker is thinking.

To illustrate how this kind of capability is currently absent

from AI programs, Dr. Bard uses the example of a computer trained

to play rock/paper/scissors. The computer would throw rock, paper

or scissors an equal number of times and expect to win about half

of the time, Dr. Bard says. But if the human were making the same

play every time, a standard algorithm wouldn't theorize what the

human opponent was thinking and shift strategies to exploit that

tendency. The computer won't realize after 10 times that the human

is always playing rock and it should play paper, he says.
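
The contrast Dr. Bard describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' code: the function names (uniform_player, adaptive_player, play) and the 10-round threshold are hypothetical, and counting the opponent's past moves is a deliberately simple stand-in for modeling what the opponent is thinking.

```python
import random
from collections import Counter

MOVES = ("rock", "paper", "scissors")
# BEATS[x] is the move that defeats x.
BEATS = {"rock": "paper", "paper": "scissors", "scissors": "rock"}


def uniform_player(history, rng):
    """Hypothetical 'standard' player: ignores the opponent entirely."""
    return rng.choice(MOVES)


def adaptive_player(history, rng, min_samples=10):
    """Hypothetical player that counters the opponent's most frequent move.

    It plays at random until it has seen min_samples opponent moves
    (an assumed threshold), then plays whatever beats the favorite.
    """
    if len(history) < min_samples:
        return rng.choice(MOVES)
    favorite, _count = Counter(history).most_common(1)[0]
    return BEATS[favorite]


def play(player, opponent_moves, seed=0):
    """Return how many rounds the player wins against a fixed move list."""
    rng = random.Random(seed)
    history = []  # opponent moves observed so far
    wins = 0
    for opponent_move in opponent_moves:
        my_move = player(history, rng)
        if my_move == BEATS[opponent_move]:
            wins += 1
        history.append(opponent_move)
    return wins


always_rock = ["rock"] * 100
uniform_wins = play(uniform_player, always_rock)
adaptive_wins = play(adaptive_player, always_rock)
print("uniform player wins:", uniform_wins)
print("adaptive player wins:", adaptive_wins)
```

Against an opponent who always throws rock, the adaptive player switches to paper once it has seen 10 rounds and wins every round after that, while the uniform player never changes its behavior.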

In terms of other games, AI programs for playing bridge have

progressed but still fall short of mastering the game, in part

because of the communication skill it demands of players. Many

forms of poker present a similar problem, where machines struggle

to discern what is implied by players' actions.

Jeff Wu, an engineer at OpenAI in San Francisco, developed a bot

that plays Hanabi using a strategy called "hat guessing," where

card hints are used in complex ways to signal to other players

which of their cards are playable. (The name comes from a popular

logic problem in which a group of people try to guess the colors of

hats on each of their heads.) While Mr. Wu's bot has been a big

success when copies of it fill every seat in a game of

Hanabi, he says that developing a theory of mind for Hanabi-playing

bots and for games with unknown co-players is still a huge

challenge.

"With hat guessing, they [the bots] don't have theory of mind --

they have theory of myself and other copies of myself, and that's

good enough if you only play yourself," he says. "If you were to

try to write a bot that really had theory of mind and could try to

figure out what the other agent was thinking and doing, that would

be a lot more challenging."

Researchers working on the Hanabi challenge at DeepMind set up

an open-source platform where people can experiment with artificial

agents and algorithms, but they don't expect the problem's solution

to come soon. Dr. Foerster says he'd be surprised if it only took

five years to master ad hoc play.

Still, Julian Togelius, an associate professor at New York

University who studies AI and games, says games like Hanabi are

fertile ground for innovation. "Game design as it has progressed is

an ongoing mapping of human intellectual ability," he says. "If

there is a form of intelligence, then someone will at some point

design a game that exercises that intelligence."

Mr. Fitch is a reporter in the San Francisco bureau of The Wall

Street Journal. He can be reached at asa.fitch@wsj.com.

(END) Dow Jones Newswires

March 29, 2019 12:17 ET (16:17 GMT)

Copyright (c) 2019 Dow Jones & Company, Inc.

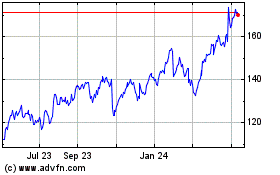

Alphabet (NASDAQ:GOOG)

Historical Stock Chart

From Aug 2024 to Sep 2024

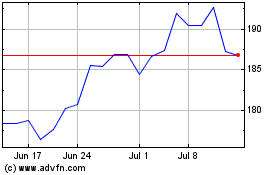

Alphabet (NASDAQ:GOOG)

Historical Stock Chart

From Sep 2023 to Sep 2024