By Georgia Wells, Shelby Holliday and Deepa Seetharaman

A decade ago, at a pro-immigration march on the steps of the Capitol building in Little Rock, Ark., community organizer Randi Romo saw a woman carrying a sign that read "no human being is illegal." She took a photograph and sent it to an activist group, which uploaded it to photo-sharing site Flickr.

Last August, the same image -- digitally altered so the sign read "give me more free shit" -- appeared on a Facebook page, Secured Borders, which called for the deportation of undocumented immigrants. The image was liked or shared hundreds of times, according to cached versions of the page.

This use of doctored images was a crucial and deceptively simple technique used by Russian propagandists to spread fabricated information during the 2016 election, one that exposes a loophole in tech company defenses. Facebook Inc. and Alphabet Inc.'s Google have traps to detect misinformation, but struggle -- then and now -- to identify falsehoods posted directly on their platforms, in particular through pictures.

Facebook disclosed last fall that Secured Borders was one of 290 Facebook and Instagram pages created and run by Russia-backed accounts that sought to amplify divisive social issues, including immigration. Last week's indictment secured by special counsel Robert Mueller cited the Secured Borders page as an example of how Russians invented fake personas in an effort to "sow discord in the U.S. political system."

The campaigns conducted by some of those accounts, according to a Wall Street Journal review, often relied on images that were doctored or taken out of context.

Algorithms designed by big technology companies are years away from being able to accurately interpret the content of many images and detect indications they might have been distorted or taken out of context. Facebook says detecting even text-based content that violates its standards is still too difficult to automate exclusively. Facebook and Google continue to rely heavily on users to flag posts that contain potentially false information. On Wednesday, for example, YouTube said it mistakenly promoted a conspiratorial video falsely accusing a teenage witness in last week's Florida school shooting of being an actor.

Automated systems are generally set up to suppress links to fake news articles. Falsehoods posted directly, such as within status updates, images and videos, escape scrutiny. Moreover, the companies are generally reluctant to remove content that is said to be false, to avoid refereeing the truth.

Users, meanwhile, are less likely to doubt the legitimacy of images, making distorted pictures unusually effective weapons in misinformation campaigns, says Claire Wardle, a research fellow and expert in social media and user-generated content at Harvard University's Shorenstein Center.

Last week's indictment described how a Russian organization called the Internet Research Agency issued guidance to its workers on ratios of text in their posts and how to use graphics and videos.

"I created all these pictures and posts, and the Americans believed that it was written by their people," one of the co-conspirators emailed a relative in September, the indictment said.

The Russian entities often added small icons known as watermarks to the corners of their doctored photos, which branded their impostor social-media accounts and lent an air of authenticity to the pictures.

"In a world where we're kind of scrolling through on these small smartphone screens, images are incredibly powerful because we're a lot less likely to stop and think, 'does this look real?'" said Dr. Wardle, who also leads First Draft News, a nonprofit dedicated to fighting digital misinformation that works with tech companies on some projects.

Facebook is working to fix its platform and prevent further manipulation ahead of the U.S. midterm elections in November -- an effort Facebook leaders have described as urgent. The company, along with Google and Twitter Inc., has been under fire from lawmakers and other critics over the handling of Russian meddling in the presidential election.

"It's abhorrent to us that a nation-state used our platform to wage a cyberwar intended to divide society," Facebook executive Samidh Chakrabarti said in a blog post last month, adding that the company should have done more to prevent it. "Now we're making up for lost time."

Facebook is refocusing to become what it calls "video first" and expects video will dominate its news feed within a few years, which suggests its challenges will only intensify.

The company plans to expand its program for tracking and suppressing links to fake news articles to include doctored images and videos, according to a Facebook spokesman. Facebook discussed the idea earlier this month with fact-checking groups it has been working with to check news stories, along with plans to build more tools to help identify when photos are taken out of context.

People tend to share images and videos more than plain text. During three months around the U.S. presidential election, tweets that included photos were nearly twice as likely to be retweeted as text-only tweets, according to researchers at Syracuse University studying how information spreads on social networks.

On April 17, University of Southern California student Tiana Lowe spotted a racist sign hanging in front of a student housing complex near campus. On a piece of cardboard, the words "No Black People Allowed" appeared next to a drawing of the Confederate flag and the hashtag #MAGA, for President Donald Trump's campaign slogan.

Ms. Lowe snapped a photo on her iPhone. In a story that day for the campus news site, the Tab, she questioned whether the incident was a hoax, writing that the sign had been hung by a black neighbor who was unaffiliated with the university following a dispute with the housing complex's residents. USC's Department of Public Safety said the man admitted to placing the sign. (The Tab, an independent campus news site, is partially funded by News Corp, owner of the Journal.)

The following day, a modified version of the photo appeared on a popular Facebook page, Blacktivist. The image was cropped, altered and watermarked with a Blacktivist logo, and the #MAGA hashtag was digitally removed. Information that could be used to identify the house, such as the phone number for the property's leasing office, was cut out.

The Blacktivist page, which last Friday's indictment said was controlled by Russian entities, cast the significance of the photo in a different light. The caption next to the photo made no mention of a hoax, instead portraying it as a racist act.

"Why racial intolerance still has a place in our country?" it read. "Racially-charged incidents continue to happen and it must receive national attention." The Blacktivist page had more than 300,000 followers at the time.

"It had clearly been framed and repackaged as an act of white supremacy rather than a hate-crime hoax," says Ms. Lowe. She became aware of the reuse of her photo two days later when a conservative college news site, the College Fix, picked up the Blacktivist post.

Ms. Lowe says she wrote a comment on the Blacktivist post saying the information had been taken out of context, and she tweeted a screenshot of the post calling Blacktivist "fake news." She didn't file a formal complaint with Facebook and didn't learn more about Blacktivist until Facebook revealed months later it was linked to Russia.

Tech companies can detect exact or near-exact copies of images, videos and audio for copyright enforcement. Spotting doctored photos or videos is a different challenge because tracking those changes requires keeping tabs on the original image, which isn't always available, says Krishna Bharat, who helped create Google News and now advises and invests in startups. Running a comparative analysis can be expensive, and there are legitimate reasons someone might crop, touch up or add a new element to a photo.

Around the time last summer that Secured Borders posted Ms. Romo's photo of the mother supposedly asking for handouts, the group also posted a meme that suggested millions of illegal immigrants may have voted in the 2008 election. It depicted a man who appeared to be Hispanic holding a document, implying that he had illegally voted.

The image originated in a newscast two years earlier on Los Angeles television station KTLA about a state program to provide driver's licenses to illegal immigrants. A KTLA executive said he wasn't aware that Secured Borders had used an image from the newscast.

When misleading content is flagged, tech companies wrestle with what to do next. Facebook, Twitter and Alphabet's YouTube say they only remove content that violates their standards, such as promoting hate speech, spam or distributing child pornography. Misinformation by itself doesn't count. Doctored images or status updates containing falsehoods can remain up if the posts don't otherwise violate their policies.

When Facebook in September removed the 290 Russia-backed pages on Facebook and its photo-sharing platform Instagram, it said it did so because the accounts misrepresented their identity, not because of the veracity of the content.

One of the misleading photos disseminated by a Russia-backed page has remained on social media because Instagram said it doesn't violate its content policies.

BlackMattersUS, a Russia-backed page purporting to promote the black community, posted a misleading photo that was reshared on Instagram as recently as January 2017. It shows a young black boy with overlaid text saying that, because of homicide, suicide and incarceration, "the black male is effectively dying at the rate of an endangered species." The BlackMattersUS account was taken down by Instagram, but because the image was shared by other legitimate accounts, the post remained online as of mid-February.

The meme -- a photo with text on top, which is tougher for software to read than plain text -- includes no citation of research or statistics. The image's claim that black adult females greatly outnumber black adult males is false, census data indicate.

The authentic photo was part of a 2013 series on "dambe" boxers in northern Nigeria by Nigerian photographer August Udoh, who wasn't aware his work was used by BlackMattersUS. "The thing is, the message itself is not even related to the image," says Mr. Udoh. "How do you put those two together and make propaganda out of it? It's crazy."

Ms. Romo, the photographer of the pro-immigration march, says she discovered her photo had been manipulated by the Russia-backed account only when she got a call from a Journal reporter. "We are living in the greatest era of information access," she says. "People will watch cat videos endlessly, but they won't take a minute to ascertain whether what they are being told is true or not."

Write to Georgia Wells at Georgia.Wells@wsj.com and Deepa Seetharaman at Deepa.Seetharaman@wsj.com

(END) Dow Jones Newswires

February 22, 2018 10:59 ET (15:59 GMT)

Copyright (c) 2018 Dow Jones & Company, Inc.

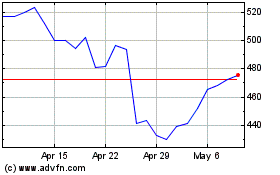

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Aug 2024 to Sep 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Sep 2023 to Sep 2024