By Lauren Weber and Deepa Seetharaman

By her second day on the job, Sarah Katz knew how jarring it can

be to work as a content moderator for Facebook Inc. She says she

saw anti-Semitic speech, bestiality photos and video of what seemed

to be a girl and boy told by an adult off-screen to have sexual

contact with each other.

Ms. Katz, 27 years old, says she reviewed as many as 8,000 posts

a day, with little training on how to handle the distress, though

she had to sign a waiver warning her about what she would

encounter. Coping mechanisms among content moderators included a

dark sense of humor and swiveling around in their chairs to

commiserate after a particularly disturbing post.

She worked at Facebook's headquarters campus in Menlo Park,

Calif., and ate for free in company cafeterias. But she wasn't a

Facebook employee. Ms. Katz was hired by a staffing company that

works for another company that in turn provides thousands of

outside workers to the social network.

Facebook employees managed the contractors, held meetings and

set policies. The outsiders did the "dirty, busy work," says Ms.

Katz, who earned $24 an hour. She left in October 2016 and now is

employed as an information-security analyst by business-software

firm ServiceNow Inc.

Deciding what does and doesn't belong online is one of the

fastest-growing jobs in the technology world -- and perhaps the

most grueling. The equivalent of 65 years of video is uploaded to

YouTube each day. Facebook receives more than a million user

reports of potentially objectionable content a day.

Humans, still, are the first line of defense. Facebook, YouTube

and other companies are racing to develop algorithms and

artificial-intelligence tools, but much of that technology is years

away from replacing people, says Eric Gilbert, a computer scientist

at the University of Michigan.

Facebook will have 7,500 content reviewers by the end of

December, up from 4,500, and it plans to double the number of

employees and contractors who handle safety and security issues to

20,000 by the end of 2018.

"I am dead serious about this," Chief Executive Mark Zuckerberg

said last month. Facebook is under pressure to improve its defenses

after failing during last year's presidential campaign to detect

that Russian operatives used its platform to influence the outcome.

Russia has denied any interference in the election.

Susan Wojcicki, YouTube's chief executive, said this month that

parent Google, part of Alphabet Inc., will expand its

content-review team to more than 10,000 in response to anger

about videos on the site, including some that seemed to endanger

children.

No one knows how many people work as content moderators, but the

number likely totals "tens of thousands of people, easily," says

Sarah Roberts, a professor of information studies at the University

of California, Los Angeles, who studies content moderation.

Outsourcing firms such as Accenture PLC, PRO Unlimited Inc. and

Squadrun supply or manage many of the workers, who are scattered

among the corporate headquarters of clients, work from home or are

based at cubicle farms and call centers in India and the

Philippines.

The arrangement helps technology giants keep their full-time,

in-house staffing lean and flexible enough to adapt to new ideas or

changes in demand. Outsourcing firms also are considered highly

adept at providing large numbers of contractors on short

notice.

Facebook decided years ago to rely on contract workers to

enforce its policies. Executives considered the work to be

relatively low-skilled compared with, say, the work performed by

Facebook engineers, who typically hold computer-science degrees and

earn six-figure salaries, plus stock options and benefits.

Reports of offensive posts from users who see them online go

into a queue for review by moderators. The most serious categories,

including terrorism, are handled first.

Several former content moderators at Facebook say they often had

just a few seconds to decide if something violated the company's

terms of service. A company spokeswoman says reviewers don't face

specific time limits.

Pay rates for content moderators in the Bay Area range from $13

to $28 an hour, say people who have held jobs there recently.

Benefits vary widely from firm to firm. Turnover is high, with most

content moderators working anywhere from a few months to about a

year before they quit or their assignments ended, according to

interviews with more than two dozen people who have worked such

jobs.

Facebook requires that its content moderators be offered

counseling through PRO Unlimited, which actually employs many of

those workers. They can have as many as three face-to-face

counseling sessions a year arranged by an employee-assistance

program, according to an internal document reviewed by The Wall

Street Journal.

The well-being of content moderators "is something we talk about

with our team members and with our outsourcing vendors to make

clear it's not just contractual. It's really important to us," says

Mark Handel, a user research manager at Facebook who helps oversee

content moderation. "Is it enough? I don't know. But it's only

getting more important and more critical."

Former content moderators recall having to view images of war

victims who had been gutted or drowned and child soldiers engaged

in killings. One former Facebook moderator reviewed a video of a

cat being thrown into a microwave.

Workers sometimes quit on their first or second day. Some leave

for lunch and never come back. Others remain unsettled by the work

-- and what they saw as a lack of emotional support or appreciation

-- long after they quit.

Shaka Tafari worked as a contractor at messaging app Whisper in

2016 soon after it began testing a messaging feature designed for

high-school students. Mr. Tafari, 30, was alarmed by the number of

rape references in text messages he reviewed, he says, and

sometimes saw graphic photos of bestiality or people killing

dogs.

"I was watching the content of deranged psychos in the woods

somewhere who don't have a conscience for the texture or feel of

human connection," he says.

He rarely had time to process what he was seeing because

managers remotely monitored the productivity of moderators. If the

managers noticed a few minutes of inactivity, they would ping him

on workplace messaging tool Slack to ask why he wasn't working,

says Mr. Tafari.

A Whisper spokesman says moderators were expected to communicate

with managers about breaks. Whisper no longer employs U.S.-based

moderators. It uses a team in the Philippines along with

machine-learning technology.

A former content moderator at Google says he became desensitized

to pornography after reviewing content for pornographic images all

day long. "The first disturbing thing was just burnout, like I've

literally been staring at porn all day and I can't see a human body

as anything except a possible [terms of service] violation," he

says.

He says the content moderators he worked with were hit hardest

by images of child sexual abuse. "The worst part is knowing some of

this happened to real people," he says.

Facebook says it removes abusive content, disables accounts and

reports some types of suspected criminal activity to

law-enforcement agencies, the National Center for Missing and

Exploited Children and other groups.

A spokeswoman for Facebook says it offers counseling, resiliency

training and other forms of psychological support to everyone who

reviews content.

A YouTube spokeswoman says: "We strive to work with vendors that

have a strong track record of good working conditions, and we offer

wellness resources to people who may come across upsetting content

during the course of their work."

Two Microsoft Corp. employees who reviewed content allege the

company failed to provide a safe workplace and support after it

became clear that viewing sexual abuse and other material was

traumatizing them. They say they regularly reviewed material

involving the sexual exploitation of children and adults.

In a civil lawsuit filed in a state court in Washington, the

employees said they suffered from insomnia, anxiety and

debilitating post-traumatic stress disorder. One of the Microsoft

managers, Henry Soto, said he has found it difficult at times to be

near computers or his own son.

The two employees are on medical leave from Microsoft. They are

seeking damages for past and future emotional distress,

mental-health treatment, impaired earning capacity and lost wages.

A trial in the case has been scheduled for October.

Rebecca Roe, a lawyer for the other manager, Greg Blauert, says

tech companies should be held accountable for the well-being of

content moderators. Contractors are especially at risk because they

have little job security and are less likely to seek help, she

says.

Microsoft denies it failed to provide a safe workplace. The

company says it "takes seriously its responsibility to remove and

report imagery of child sexual exploitation and abuse being shared

on its services, as well as the health and resiliency of the

employees who do this important work." Content moderators at

Microsoft are required to participate in psychological

counseling.

While Facebook and other tech companies are spending more money

than ever to police their sites, few seem inclined to convert

outside contractors into employees. Some content moderators say

they have been told by managers that data based on their decisions

would help train algorithms that might eventually make the humans

expendable.

Andrew Giese, 28, says he left his full-time job at grocery

chain Trader Joe's Co. to do content moderation for Apple Inc.,

where he spent a year mostly sifting through user comments posted

on iTunes as a contract worker.

When the contract ended, he says, Apple managers told him he

would be eligible to return after a three-month furlough, but there

were no openings available. Mr. Giese eventually went back to

Trader Joe's.

An Apple spokesman says most of its content moderators are

full-time employees, and contractors are told clearly that their

assignments are temporary.

Contracting firms still approach Mr. Giese on behalf of tech

giants that are looking for content moderators. He would say yes if

he were confident it would lead to full-time employment. "I can't

just leave a full-time job again," he says.

Write to Lauren Weber at lauren.weber@wsj.com and Deepa

Seetharaman at Deepa.Seetharaman@wsj.com

(END) Dow Jones Newswires

December 27, 2017 13:28 ET (18:28 GMT)

Copyright (c) 2017 Dow Jones & Company, Inc.

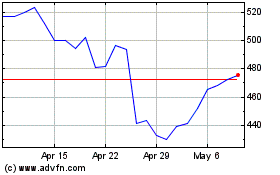

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024