Mark Zuckerberg's Statement on Data Use by Cambridge Analytica

March 21 2018 - 4:20PM

Dow Jones News

Text of Mark Zuckerberg's Statement

I want to share an update on the Cambridge Analytica situation
-- including the steps we've already taken and our next steps to
address this important issue.

We have a responsibility to protect your data, and if we can't
then we don't deserve to serve you. I've been working to understand
exactly what happened and how to make sure this doesn't happen
again. The good news is that the most important actions to prevent
this from happening again today we have already taken years ago.
But we also made mistakes, there's more to do, and we need to step
up and do it.

Here's a timeline of the events:

In 2007, we launched the Facebook Platform with the vision that
more apps should be social. Your calendar should be able to show
your friends' birthdays, your maps should show where your friends
live, and your address book should show their pictures. To do this,
we enabled people to log into apps and share who their friends were
and some information about them.

In 2013, a Cambridge University researcher named Aleksandr Kogan
created a personality quiz app. It was installed by around 300,000
people who shared their data as well as some of their friends'
data. Given the way our platform worked at the time this meant
Kogan was able to access tens of millions of their friends' data.

In 2014, to prevent abusive apps, we announced that we were
changing the entire platform to dramatically limit the data apps
could access. Most importantly, apps like Kogan's could no longer
ask for data about a person's friends unless their friends had also
authorized the app. We also required developers to get approval
from us before they could request any sensitive data from people.
These actions would prevent any app like Kogan's from being able to
access so much data today.

In 2015, we learned from journalists at The Guardian that Kogan
had shared data from his app with Cambridge Analytica. It is
against our policies for developers to share data without people's
consent, so we immediately banned Kogan's app from our platform,
and demanded that Kogan and Cambridge Analytica formally certify
that they had deleted all improperly acquired data. They provided
these certifications.

Last week, we learned from The Guardian, The New York Times and
Channel 4 that Cambridge Analytica may not have deleted the data as
they had certified. We immediately banned them from using any of
our services. Cambridge Analytica claims they have already deleted
the data and has agreed to a forensic audit by a firm we hired to
confirm this. We're also working with regulators as they
investigate what happened.

This was a breach of trust between Kogan, Cambridge Analytica
and Facebook. But it was also a breach of trust between Facebook
and the people who share their data with us and expect us to
protect it. We need to fix that.

In this case, we already took the most important steps a few
years ago in 2014 to prevent bad actors from accessing people's
information in this way. But there's more we need to do and I'll
outline those steps here:

First, we will investigate all apps that had access to large
amounts of information before we changed our platform to
dramatically reduce data access in 2014, and we will conduct a full
audit of any app with suspicious activity. We will ban any
developer from our platform that does not agree to a thorough
audit. And if we find developers that misused personally
identifiable information, we will ban them and tell everyone
affected by those apps. That includes people whose data Kogan
misused here as well.

Second, we will restrict developers' data access even further to
prevent other kinds of abuse. For example, we will remove
developers' access to your data if you haven't used their app in 3
months. We will reduce the data you give an app when you sign in --
to only your name, profile photo, and email address. We'll require
developers to not only get approval but also sign a contract in
order to ask anyone for access to their posts or other private
data. And we'll have more changes to share in the next few days.

Third, we want to make sure you understand which apps you've
allowed to access your data. In the next month, we will show
everyone a tool at the top of your News Feed with the apps you've
used and an easy way to revoke those apps' permissions to your
data. We already have a tool to do this in your privacy settings,
and now we will put this tool at the top of your News Feed to make
sure everyone sees it.

Beyond the steps we had already taken in 2014, I believe these
are the next steps we must take to continue to secure our platform.

I started Facebook, and at the end of the day I'm responsible
for what happens on our platform. I'm serious about doing what it
takes to protect our community. While this specific issue involving
Cambridge Analytica should no longer happen with new apps today,
that doesn't change what happened in the past. We will learn from
this experience to secure our platform further and make our
community safer for everyone going forward.

I want to thank all of you who continue to believe in our
mission and work to build this community together. I know it takes
longer to fix all these issues than we'd like, but I promise you
we'll work through this and build a better service over the long
term.

(END) Dow Jones Newswires

March 21, 2018 16:05 ET (20:05 GMT)

Copyright (c) 2018 Dow Jones & Company, Inc.

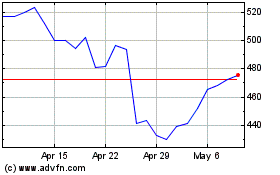

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Aug 2024 to Sep 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Sep 2023 to Sep 2024