Google Isn't Playing Games With New Chip

May 18 2016 - 3:26PM

Dow Jones News

By Robert McMillan

When Google's AlphaGo computer program bested South Korean Go

champion Lee Se-dol in March, it took advantage of a secret weapon:

a microprocessor chip specially designed by Google. The chip sped

up the Go-playing software, allowing it to plot moves in the

time-limited match and look further ahead in the game.

But the processor, built in secret over the last three years and

announced on Wednesday, plays a more strategic role in the company.

Google, a division of Alphabet Inc., has been using it for more

than a year to accelerate artificial intelligence applications as

the software techniques known as machine learning become

increasingly important to its core businesses. Overall, the chip,

known as the Tensor Processing Unit, is 10 times faster than

alternatives Google considered for this work, the company said.

The company had been rumored to be working on its own

chip designs, but Wednesday's announcement marked the first time it

confirmed such an effort.

Google isn't the only firm speeding up artificial intelligence

with new chip designs. Microsoft Corp. is using programmable chips

called Field Programmable Gate Arrays to accelerate AI

computations, including those used by its Bing search engine.

International Business Machines Corp. designed its own

brain-inspired chip called TrueNorth that is currently being tested

by Lawrence Livermore National Laboratory.

Nvidia Corp. has been pushing its chips, known as graphics

processing units, into artificial intelligence as well. GPUs are

designed to render videogame images on personal computers but have

turned out to be well suited to performing calculations used by

machine learning applications.

Google, which relies mainly on standard Intel Corp. processors

for most computing jobs, also has used Nvidia GPUs for artificial

intelligence calculations including its early tests of the AlphaGo

software.

Large companies have begun using new processor designs to

augment general-purpose processors as the pace of improvement in

that field has slowed, said Mark Horowitz, a professor of

electrical engineering at Stanford University.

"They're not doing this to replace the Intel processors," he

said. "These are addendums to these processors."

Google and Apple Inc. lately have been aggressively hiring chip

designers and engineers, Mr. Horowitz said. Apple launched its own

chip-making effort around 2009 to improve the power and efficiency

of its devices and develop new features.

Google believes its new chip will give it a seven-year advantage

-- roughly three processor generations -- over currently available

processors when it comes to machine learning. That is important

because Google is betting its future on such software. It uses

machine learning in more than 100 programs for applications

including search, voice recognition, and self-driving cars. Such

programs require intensive calculation, and supplying the

processing power and electricity to do this math quickly is

expensive.

"In order to make them feasible to roll out, economically, with

the required latency for users and all that stuff, we looked around

at the existing alternative and we decided that we needed to do our

own custom accelerators," said Norman Jouppi, a distinguished

hardware engineer at Google.

It is unclear how much of Google's overall computation runs on

its new processors. Mr. Jouppi said Google uses more than 1,000 of

the chips, but he wouldn't say whether that meant the company was

buying fewer processors from vendors such as Intel or Nvidia.

"We're still buying literally tons of CPUs and GPUs," he said.

"Whether it's a ton less than we would have otherwise, I can't

say."

Google began using the Tensor Processing Unit in April 2015 to

speed up its Street View service's reading of street signs. The chip

allows the company to process all the text stored in its massive

collection of Street View images -- things such as street signs and

address numbers attached to the sides of houses -- in just five

days, much faster than previous methods, Mr. Jouppi said.

The chip also is used in Google search ranking, photo

processing, speech recognition, and language translation. The

company plans to make the chips available as part of its Google

Cloud Platform computing-on-demand service, he said.

TPU chips are soldered onto cards that slide into the disk-drive

slots in Google's standard servers, where they handle the

specialized calculations required by Google's machine learning

software.

--Don Clark contributed to this article

Write to Robert McMillan at Robert.Mcmillan@wsj.com

(END) Dow Jones Newswires

May 18, 2016 15:11 ET (19:11 GMT)

Copyright (c) 2016 Dow Jones & Company, Inc.

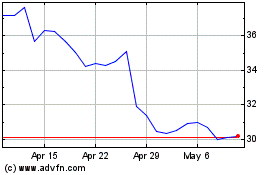

Intel (NASDAQ:INTC)

Historical Stock Chart

From Aug 2024 to Sep 2024

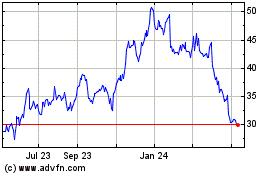

Intel (NASDAQ:INTC)

Historical Stock Chart

From Sep 2023 to Sep 2024