By Kristin Broughton

After facing public backlash in 2018 for doing business with

U.S. immigration authorities amid the separation of migrant

families at the southern U.S. border, Salesforce.com Inc., a

company known for speaking up on social issues, hired a resident

ethicist.

Paula Goldman joined the business software company early last

year as chief ethical and humane use officer, a new role tasked

with developing a framework for making decisions on complicated

political issues.

Although the company's contract with U.S. Customs and Border

Protection remains in place, Salesforce has tackled other

controversial issues. In her first year on the job, Ms. Goldman

supervised the development of a corporate policy that prohibits

customers from using Salesforce's software to sell military-style

firearms to private citizens.

She also is responsible for ensuring Salesforce's products are

developed with ethics in mind, particularly those involving

artificial intelligence. One way she has done that is by

introducing a process known as "consequence scanning," an exercise

that requires employees to document the potential unintended

outcomes of releasing a new function, she said.

"We're in this moment of correction where it's like, 'Oh yeah,

this is our responsibility to integrate this question into the way

we do business,'" Ms. Goldman said.

Before joining the San Francisco-based company, Ms. Goldman, who

was trained as an anthropologist, was a senior leader at Omidyar

Network, the social-impact investing firm founded by eBay Inc.

founder Pierre Omidyar. She also was a member of Salesforce's

ethical use advisory council, a group made up of employees and

external technology experts.

Ms. Goldman, who reports to Salesforce Chief Equality Officer

Tony Prophet, spoke with Risk & Compliance Journal about her

first year on the job. Here are edited and condensed excerpts from

the conversation.

WSJ: The restrictions that Salesforce placed on firearms -- does

your office facilitate those conversations internally?

Yes, we do. How it works is, the council plays a really

important role. We set up these processes for [employees] to raise

flags. And then obviously we're also proactively looking at, what

are the biggest risk areas? What types of policies put us on the

best footing? When an issue is prioritized, we run through

exhaustive analysis.

WSJ: Is that a financial analysis?

No. It's an ethical analysis. So it's: "What's the issue? What

role are we playing or not playing? How does it stack up against

our principles? What are the different perspectives on this issue

in civil society? What's the regulatory landscape?" All of that

stuff. And then ultimately [we come up with] a recommendation.

Different subcommittees of the council are tasked with different

topics, but we'll take it to a subcommittee of the council. They

will vote. That vote is advisory, and then it goes up to

leadership.

WSJ: How was the firearms issue raised? Was it flagged

internally?

We are always monitoring any number of issues that might cross

our desk. In this case, an employee did raise the question. There

were a number of internal discussions about how our product is

getting used. What is our role in this issue? And a number of

internal consultations with different teams, along with all of the

analysis.

WSJ: Is it fair to describe one of your responsibilities as

managing the reputation of the company?

No, I wouldn't say that. I think that's one of the things that

sets apart an effort like this. We're charged with taking an

ethical lens to our actions, and that's what leads the analysis.

Sure, are there reputational consequences to the actions we take?

Yeah, of course there are. But is that driving our action? No.

WSJ: Has your work on product design influenced the decisions

the company has made on what to launch?

Definitely. And I think we're working on a more cohesive

summary of what that is, but it manifests in a couple of different

ways. I'll give you an example.

Within our AI products, we have a feature called protected

fields. Let's say you're building an algorithm and your training

data has a category for race. And you're saying, "Well, I don't

know if I want race in this algorithm because it might bias the

results." Using the protected fields feature, you can protect it so

that it's not included in the calculation.

But what's interesting about this feature is that it will say,

"Hey, I see you protected race. I see that you also have ZIP Codes,

and ZIP Codes can be a proxy for race. Do you want to include this

field?"
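
The proxy warning described above can be illustrated with a toy sketch. This is not Salesforce's implementation, which the interview does not describe; the function names, the "purity" score and the 0.9 threshold are all assumptions made for illustration. The idea is to measure how well each remaining field predicts the protected field and flag any field that predicts it too well.

```python
from collections import Counter, defaultdict


def proxy_strength(rows, candidate, protected):
    """Fraction of rows whose protected value matches the most common
    protected value among rows sharing the same candidate value.
    (Hypothetical scoring rule, chosen for simplicity.)"""
    groups = defaultdict(Counter)
    for row in rows:
        groups[row[candidate]][row[protected]] += 1
    matched = sum(max(counts.values()) for counts in groups.values())
    return matched / len(rows)


def flag_proxies(rows, protected, threshold=0.9):
    """Return the non-protected fields that predict the protected field
    at or above the (assumed) threshold."""
    fields = [f for f in rows[0] if f != protected]
    return [f for f in fields
            if proxy_strength(rows, f, protected) >= threshold]


# Made-up data for illustration only.
rows = [
    {"race": "A", "zip_code": "11111", "plan": "basic"},
    {"race": "A", "zip_code": "11111", "plan": "pro"},
    {"race": "B", "zip_code": "22222", "plan": "basic"},
    {"race": "B", "zip_code": "22222", "plan": "pro"},
]

flagged = flag_proxies(rows, protected="race")
for field in flagged:
    print(f"You protected 'race'. '{field}' may be a proxy for it. "
          "Do you want to include this field?")
```

In this toy data each ZIP Code maps to a single race value, so `zip_code` is flagged, while `plan` is not. A score this simple would also flag any near-unique field such as a customer ID, so a real check would likely need a measure that accounts for the number of distinct values and for the baseline distribution of the protected field.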

WSJ: What's on the agenda for your second year?

A couple things. One is doubling down on the product side of the

equation and really formalizing the goals with key buy-in across

product leadership. And formalizing risk frameworks. We would be

able to say, the same way that a security team would say, "I see a

security risk. It's level one, two, three or four."

Number two, on the policy side of things, is continuing to add

rigor to the process. And what does that mean? It means ever

increasing the circle of stakeholders that we talk to within civil

society.

Write to Kristin Broughton at Kristin.Broughton@wsj.com

(END) Dow Jones Newswires

February 17, 2020 11:14 ET (16:14 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

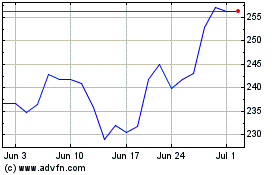

Salesforce (NYSE:CRM)

Historical Stock Chart

From Aug 2024 to Sep 2024

Salesforce (NYSE:CRM)

Historical Stock Chart

From Sep 2023 to Sep 2024