Amazon EBS io2 Block Express volumes deliver the first storage area network (SAN) built for the cloud, with up to 256,000 IOPS, 4,000 MB/second throughput, and 64 TB of capacity

Next-generation Amazon EBS Gp3 volumes give customers the ability to provision additional IOPS and throughput performance independent of storage capacity, provide up to 4x peak throughput, and are priced 20% lower per GB than previous generation volumes

Amazon S3 Intelligent-Tiering adds S3 Glacier Archive and Deep Archive access to existing Frequent and Infrequent access tiers to automatically reduce customers’ storage costs by up to 95% for objects that are rarely accessed

Amazon S3 Replication (multi-destination) provides the ability to replicate data to multiple S3 buckets simultaneously in the same AWS Region or any number of AWS Regions to meet customers’ global content distribution, storage compliance, and data-sharing needs

Today at AWS re:Invent, Amazon Web Services, Inc. (AWS), an

Amazon.com company (NASDAQ: AMZN), announced four storage

innovations that deliver added storage performance, resiliency, and

value to customers, including:

- Amazon EBS io2 Block Express volumes: Next-generation

storage server architecture delivers the first SAN built for the

cloud, with up to 256,000 IOPS, 4,000 MB/second throughput, and 64

TB of capacity (a 4x increase across all metrics compared to

standard io2 volumes), to meet the performance requirements of the

most I/O intensive business critical applications (available in

preview).

- Amazon EBS Gp3 volumes: Next-generation general purpose

SSD volumes for Amazon EBS give customers the flexibility to

provision additional IOPS and throughput without needing to add

additional storage, while also offering higher baseline performance

of 3,000 IOPS and 125 MB/second of throughput with the ability to

provision up to 16,000 IOPS and 1,000 MB/second peak throughput (a

4x increase over Gp2 volumes) at a 20% lower price per GB of

storage than existing Gp2 volumes (available today).

- Amazon S3 Intelligent-Tiering automatic data archiving:

Two new tiers (Archive Access and Deep Archive Access) help

customers further reduce their storage costs by up to 95% for

objects rarely accessed by automatically moving unused objects into

archive access tiers (available today).

- Amazon S3 Replication (multi-destination): New

capability gives customers the ability to replicate data to

multiple S3 buckets in the same or different AWS Regions, in order

to better manage content distribution, compliance, and data-sharing

needs across Regions (available today).

EBS io2 Block Express volumes deliver the first SAN built for the cloud

Customers choose io2 volumes (the latest generation of Provisioned

IOPS volumes) to run their critical,

performance-intensive applications like SAP HANA, Microsoft SQL

Server, IBM DB2, MySQL, PostgreSQL, and Oracle databases because

they provide 99.999% (five 9s) of durability and 4x more IOPS than

general purpose EBS volumes. Some applications require higher IOPS,

throughput, or capacity than offered by a single io2 volume. To

address the needed performance, customers often stripe multiple io2

volumes together. However, the most demanding applications require

more io2 volumes to be striped together than customers want to

manage. For these highly demanding applications, many customers

have historically used on-premises SANs (a set of disks accessed

over the local network). However, SANs have numerous drawbacks.

They are expensive due to high upfront acquisition costs, require

complex forecasting to ensure sufficient capacity, are complicated

and hard to manage, and consume valuable data center space and

networking capacity. When a customer exceeds the capacity of a SAN,

they have to buy another entire SAN, which is expensive and forces

customers to pay for unused capacity. Customers told us they wanted

the power of a SAN, but in the cloud, which hasn’t existed – until

now.

EBS Block Express is a completely new storage architecture that

gives customers the first SAN built for the cloud. EBS Block

Express is designed for the largest, most I/O intensive

mission-critical deployments of Oracle, SAP HANA, Microsoft SQL

Server, and SAS Analytics that benefit from high-volume IOPS, high

throughput, high durability, high storage capacity, and low

latency. With io2 volumes running on Block Express, a single io2

volume can now be provisioned with up to 256,000 IOPS, drive up to

4,000 MB/second of throughput, and offer 64 TB of capacity – a 4x

increase over existing io2 volumes across all parameters.

Additionally, with io2 Block Express volumes, customers can achieve

consistent sub-millisecond latency for their latency-sensitive

applications. Customers can also stripe multiple io2 Block Express

volumes together to get even better performance than a single

volume can provide. Block Express helps io2 volumes achieve this

performance by completely reinventing the underlying EBS hardware,

software, and networking stacks. By decoupling the compute from the

storage at the hardware layer and rewriting the software to take

advantage of this decoupling, EBS Block Express enables new levels

of performance and reduces time to innovation. By also rewriting

the networking stack to take advantage of the high-performance

Scalable Reliable Datagrams (SRD) networking protocol, Block

Express dramatically reduces latency. These improvements are

available to customers with no upfront commitments to use io2 Block

Express volumes, and customers can provision and scale capacity

without the upfront costs of a SAN.
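
The striping trade-off described above can be made concrete with a little arithmetic. The sketch below is illustrative only: the Block Express limits are the figures quoted in this announcement, the standard io2 limits are derived from its "4x increase" statement, and the workload numbers and the `volumes_needed` helper are hypothetical.

```python
import math

# Per-volume limits. The Block Express figures come from the announcement;
# the standard io2 figures are derived from its "4x increase" statement.
IO2_BLOCK_EXPRESS = {"iops": 256_000, "throughput_mbps": 4_000, "capacity_tb": 64}
IO2_STANDARD = {name: limit // 4 for name, limit in IO2_BLOCK_EXPRESS.items()}


def volumes_needed(requirement, per_volume_limits):
    """Return how many striped volumes are needed to satisfy every dimension."""
    return max(
        math.ceil(requirement[name] / per_volume_limits[name])
        for name in requirement
    )


# Hypothetical workload, chosen only for illustration.
workload = {"iops": 200_000, "throughput_mbps": 3_000, "capacity_tb": 40}

standard_count = volumes_needed(workload, IO2_STANDARD)
block_express_count = volumes_needed(workload, IO2_BLOCK_EXPRESS)
print(f"standard io2 volumes to stripe: {standard_count}")
print(f"io2 Block Express volumes: {block_express_count}")
```

For this hypothetical workload the IOPS requirement is the binding dimension, so it determines how many standard io2 volumes would have to be striped together.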

In the coming months, additional SAN features will be added to

Block Express volumes. These include multi-attach with I/O fencing

to give customers the ability to safely attach multiple instances

to a single volume at the same time, Fast Snapshot Restore, and

Elastic Volumes to increase EBS volume size, type, and performance.

To learn more about io2 volumes powered by Block Express visit:

https://aws.amazon.com/ebs

EBS Gp3 volumes decouple IOPS from storage capacity, deliver more performance, and are priced 20% lower than previous generation volumes

Customers use EBS volumes to support a broad range of

workloads, such as relational and non-relational databases (e.g.

Microsoft SQL Server and Oracle), enterprise applications,

containerized applications, big data analytics engines, distributed

file systems, virtual desktops, dev/test environments, and media

workflows. Gp2 volumes have made it easy and cost effective for

customers to meet their IOPS and throughput requirements for many

of these workloads, but some applications require more IOPS than a

single Gp2 volume can deliver. With Gp2 volumes, performance scales

up with storage capacity, so customers can get higher IOPS and

throughput for their applications by provisioning a larger storage

volume size. However, some applications require higher performance,

but do not need higher storage capacity (e.g. databases like MySQL

and Cassandra). These customers can end up paying for more storage

than they need to get the required IOPS performance. Customers who

are running these workloads want to meet their performance needs

without having to provision and pay for a larger storage

volume.
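
A rough calculation shows how coupling performance to capacity leads to over-provisioning. This is a sketch under a stated assumption: the ratio of 3 baseline IOPS per GiB for Gp2 is taken from general EBS documentation, not from this announcement, and burst behavior is ignored. The workload figures and the `gp2_size_for_iops` helper are hypothetical.

```python
import math

# Assumption: Gp2 baseline ratio, not stated in the announcement.
GP2_IOPS_PER_GIB = 3


def gp2_size_for_iops(target_iops, data_gib):
    """Return (provisioned GiB, unused GiB) for a Gp2 volume sized for IOPS."""
    size_for_performance = math.ceil(target_iops / GP2_IOPS_PER_GIB)
    provisioned = max(size_for_performance, data_gib)
    return provisioned, provisioned - data_gib


# Hypothetical database: 500 GiB of data that needs 9,000 sustained IOPS.
provisioned_gib, surplus_gib = gp2_size_for_iops(target_iops=9_000, data_gib=500)
print(f"provisioned: {provisioned_gib} GiB, unused: {surplus_gib} GiB")
```

In this example most of the provisioned capacity exists only to reach the IOPS target, which is the cost the paragraph above describes.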

Next-generation Gp3 volumes give customers the ability to

provision IOPS and throughput independently of storage

capacity. For workloads where the application needs more

performance, customers can modify the Gp3 volumes to provision the

IOPS and throughput they need, without having to add more storage

capacity. Gp3 volumes deliver sustained baseline performance of

3,000 IOPS and 125 MB/second with the ability to provision up to

16,000 IOPS and 1,000 MB/second peak throughput (a 4x increase over

Gp2 volumes). In addition to saving customers money by allowing

them to scale IOPS independent of storage, Gp3 volumes are also

priced 20% lower per GB than existing Gp2 volumes. Customers can

easily migrate Gp2 volumes to Gp3 volumes using Elastic Volumes, an

existing feature of EBS that allows customers to modify the volume

type, IOPS, storage capacity, and throughput of their existing EBS

volumes without interrupting their Amazon Elastic Compute Cloud

(EC2) instances. Customers can also easily create new Gp3 volumes

and scale performance using the AWS Management Console, the AWS

Command Line Interface (CLI), or the AWS SDK. To get started with

Gp3 volumes visit: https://aws.amazon.com/ebs/
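
As a minimal sketch of what provisioning performance independently of storage might look like from the SDK, the helper below builds the arguments for a volume modification and checks them against the limits quoted in this announcement. The `gp3_modify_request` function and the volume ID are hypothetical. The parameter names follow what boto3's EC2 `modify_volume` call is understood to accept (where the volume type is written in lowercase as `gp3`), and should be checked against the SDK documentation before use.

```python
# Limits quoted in the announcement.
GP3_BASELINE_IOPS = 3_000
GP3_MAX_IOPS = 16_000
GP3_BASELINE_THROUGHPUT = 125  # MB/second
GP3_MAX_THROUGHPUT = 1_000     # MB/second


def gp3_modify_request(volume_id, iops=GP3_BASELINE_IOPS,
                       throughput=GP3_BASELINE_THROUGHPUT):
    """Build keyword arguments that move a volume to gp3 without resizing it."""
    if not GP3_BASELINE_IOPS <= iops <= GP3_MAX_IOPS:
        raise ValueError(f"iops must be between {GP3_BASELINE_IOPS} and {GP3_MAX_IOPS}")
    if not GP3_BASELINE_THROUGHPUT <= throughput <= GP3_MAX_THROUGHPUT:
        raise ValueError(
            f"throughput must be between {GP3_BASELINE_THROUGHPUT} and {GP3_MAX_THROUGHPUT}"
        )
    # No "Size" key: storage capacity is left unchanged.
    return {
        "VolumeId": volume_id,
        "VolumeType": "gp3",
        "Iops": iops,
        "Throughput": throughput,
    }


# Hypothetical volume ID, for illustration only.
request = gp3_modify_request("vol-0123456789abcdef0", iops=9_000, throughput=500)
print(request)

# With boto3 installed and credentials configured, the request could be sent as:
#   boto3.client("ec2").modify_volume(**request)
```

Keeping the validation separate from the API call makes it possible to check a planned change before any request is made.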

Amazon S3 Intelligent-Tiering adds two new archive tiers that deliver up to 95% storage cost savings

The S3

Intelligent-Tiering storage class automatically optimizes

customers’ storage costs for data with unknown or changing access

patterns. It is the first and only cloud storage solution to

provide dynamic pricing automatically based on the changing access

patterns of individual objects in storage. S3 Intelligent-Tiering

has been widely adopted by customers with data sets that have

varying access patterns (e.g. data lakes) or unknown storage access

patterns (e.g. newly-launched applications). S3 Intelligent-Tiering

charges for storage in two pricing tiers: one tier for frequent

access (for real-time data querying) and a cost-optimized tier for

infrequent access (for batch querying). However, many AWS customers

have storage that they very rarely access and use S3 Glacier or S3

Glacier Deep Archive to reduce their storage costs for this

archived data. Prior to today, customers needed to manually build

their own applications to monitor and record access to individual

objects in order to determine which objects were rarely accessed and

needed to be moved to archive. Then, they needed to manually move

them.

The addition of Archive Access and Deep Archive Access tiers to

S3 Intelligent-Tiering further enhances the first and only storage

class in the cloud that provides dynamic tiering and pricing. S3

Intelligent-Tiering now provides automatic tiering and dynamic

pricing across four different access tiers (Frequent, Infrequent,

Archive, and Deep Archive). Customers using S3 Intelligent-Tiering

can save up to 95% on storage for objects that go unaccessed for 180

days or more and are automatically moved from the Frequent Access

tier to the Deep Archive Access tier. Once a

customer has activated one or both of the archive access tiers, S3

Intelligent-Tiering will automatically move objects that have not

been accessed for 90 days to the Archive access tier, and after 180

days to the Deep Archive access tier. S3 Intelligent-Tiering

supports features like S3 Inventory to report on the access tier of

objects, and S3 Replication to replicate data to any AWS Region.

There are no retrieval fees when using S3 Intelligent-Tiering

and no additional tiering fees when objects are moved between

access tiers. S3 Intelligent-Tiering with the new archive access

tiers is available today in all AWS Regions. To get started with

Amazon S3 Intelligent-Tiering visit:

https://aws.amazon.com/s3/storage-classes/
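
The tiering rules described above can be sketched as a small decision function. The 90-day and 180-day thresholds come from this announcement; the 30-day threshold for the Infrequent tier is an assumption that the announcement does not state. The function names are hypothetical, and the configuration dictionary follows the shape that boto3's `put_bucket_intelligent_tiering_configuration` call is understood to accept, which should be verified against the SDK documentation.

```python
ARCHIVE_AFTER_DAYS = 90        # from the announcement
DEEP_ARCHIVE_AFTER_DAYS = 180  # from the announcement
INFREQUENT_AFTER_DAYS = 30     # assumption: not stated in the announcement


def access_tier(days_since_access, archive_enabled=False, deep_archive_enabled=False):
    """Return the access tier an object would sit in under the rules above."""
    if deep_archive_enabled and days_since_access >= DEEP_ARCHIVE_AFTER_DAYS:
        return "Deep Archive"
    if archive_enabled and days_since_access >= ARCHIVE_AFTER_DAYS:
        return "Archive"
    if days_since_access >= INFREQUENT_AFTER_DAYS:
        return "Infrequent"
    return "Frequent"


def archive_tiering_config(config_id):
    """Build a configuration that activates both archive access tiers."""
    return {
        "Id": config_id,
        "Status": "Enabled",
        "Tierings": [
            {"Days": ARCHIVE_AFTER_DAYS, "AccessTier": "ARCHIVE_ACCESS"},
            {"Days": DEEP_ARCHIVE_AFTER_DAYS, "AccessTier": "DEEP_ARCHIVE_ACCESS"},
        ],
    }


for days in (10, 45, 120, 200):
    print(days, access_tier(days, archive_enabled=True, deep_archive_enabled=True))

# Hypothetical configuration ID, for illustration only.
config = archive_tiering_config("archive-cold-objects")
```

Because both archive tiers are opt-in, an object that has not been accessed for 200 days stays in the Infrequent tier unless the customer has activated at least one of them.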

Amazon S3 Replication extends the ability to replicate data to multiple destinations within the same AWS Region or across different AWS Regions

Customers use S3 Replication today to

create a replica copy of their data within the same AWS Region or

across different AWS Regions for compliance requirements,

low-latency performance, or data sharing across accounts. Some

customers also need to replicate the actual data to multiple

destinations (S3 buckets in the same AWS Region or S3 buckets in

multiple Regions) to meet data sovereignty requirements, to support

collaboration between geographically-distributed teams, or to

maintain the same data sets in multiple AWS Regions for resiliency.

To accomplish this today, customers must build their own

multi-destination replication service by monitoring S3 events to

identify any newly-created objects. They then spread out these

events into multiple queues, invoke AWS Lambda functions to copy

objects to each destination S3 bucket, track the status of each API

call, and aggregate the results. Customers also need to monitor and

maintain these systems, which create added expense and operational

overhead.
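
To give a sense of the moving parts in such a do-it-yourself system, here is a deliberately simplified, in-memory sketch of the fan-out and aggregation steps. It makes no AWS calls: the `fake_copy` function is a hypothetical stand-in for the Lambda function that copies one object, and the bucket and object names are invented for illustration.

```python
from collections import defaultdict


def fan_out(object_keys, destination_buckets):
    """Expand each new-object event into one copy task per destination."""
    return [(key, bucket) for key in object_keys for bucket in destination_buckets]


def run_copies(tasks, copy_object):
    """Run every copy task and aggregate the per-object results."""
    results = defaultdict(dict)
    for key, bucket in tasks:
        try:
            copy_object(key, bucket)
            results[key][bucket] = "COMPLETED"
        except Exception:  # a real system would retry and alert here
            results[key][bucket] = "FAILED"
    return dict(results)


# Hypothetical stand-in for the function that copies one object.
def fake_copy(key, bucket):
    if bucket == "dr-bucket" and key == "b.log":
        raise RuntimeError("simulated copy failure")


tasks = fan_out(["a.log", "b.log"], ["archive-bucket", "dr-bucket"])
status = run_copies(tasks, fake_copy)
print(status)
```

Even this toy version has to track partial failures per object and per destination, which hints at the operational overhead of running the real thing.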

With S3 Replication (multi-destination), customers no longer

need to develop their own solutions for duplicating data across

multiple AWS Regions. Customers can now use S3 Replication to

replicate data to multiple buckets within the same AWS Region,

across multiple AWS Regions, or a combination of both using the

same policy-based, managed solution with events and metrics to

monitor their data replication. For example, a customer can now

easily replicate data to multiple S3 buckets in different AWS

Regions – one for primary storage, one for archiving, and one for

disaster recovery. Customers can also distribute data sets and

updates to all AWS Regions for low-latency performance. With S3

Replication (multi-destination), customers can also specify

different storage classes for different destinations to save on

storage costs and meet data compliance requirements (e.g. customers

can use the S3 Intelligent-Tiering storage class for data in two

AWS Regions and have another copy in S3 Glacier Deep Archive for a

low-cost replica). S3 Replication (multi-destination) fully

supports existing S3 Replication functionality like Replication

Time Control to provide predictable replication time backed by a

Service Level Agreement to meet their compliance or business

requirements. Customers can also monitor the status of their

replication with Amazon CloudWatch metrics, events, and the

object-level replication status field. S3 Replication

(multi-destination) can be configured using the S3 management

console, AWS CloudFormation, or via the AWS CLI or AWS SDK. To get

started with S3 Replication (multi-destination) visit:

https://aws.amazon.com/s3/features/replication/
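
A multi-destination setup like the one in the example above (two copies in S3 Intelligent-Tiering and one in S3 Glacier Deep Archive) could be expressed as one replication rule per destination. The sketch below only builds the configuration dictionary. The role ARN, bucket ARNs, and the `replication_configuration` helper are hypothetical, and the dictionary shape follows what boto3's `put_bucket_replication` call is understood to accept, so it should be verified against the SDK documentation before use.

```python
def replication_configuration(role_arn, destinations):
    """Build a replication configuration with one rule per destination.

    destinations is a list of (bucket_arn, storage_class) pairs.
    """
    rules = []
    for priority, (bucket_arn, storage_class) in enumerate(destinations, start=1):
        bucket_name = bucket_arn.rsplit(":", 1)[-1]
        rules.append({
            "ID": f"replicate-to-{bucket_name}",
            "Priority": priority,
            "Status": "Enabled",
            "Filter": {},  # assumption: an empty filter applies the rule to every object
            "DeleteMarkerReplication": {"Status": "Disabled"},
            "Destination": {"Bucket": bucket_arn, "StorageClass": storage_class},
        })
    return {"Role": role_arn, "Rules": rules}


# Hypothetical ARNs, for illustration only.
config = replication_configuration(
    "arn:aws:iam::123456789012:role/example-replication-role",
    [
        ("arn:aws:s3:::example-copy-region-a", "INTELLIGENT_TIERING"),
        ("arn:aws:s3:::example-copy-region-b", "INTELLIGENT_TIERING"),
        ("arn:aws:s3:::example-low-cost-replica", "DEEP_ARCHIVE"),
    ],
)
for rule in config["Rules"]:
    print(rule["ID"], rule["Destination"]["StorageClass"])

# With boto3 installed and credentials configured, this could be applied as:
#   boto3.client("s3").put_bucket_replication(
#       Bucket="example-source-bucket", ReplicationConfiguration=config)
```

Giving each rule its own priority and storage class is what lets a single policy serve several destinations with different cost profiles.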

“More data will be created in the next three years than was

created over the past 30 years,” said Mai-Lan Tomsen Bukovec, Vice

President, Storage, at AWS. “The cloud is a big part of why

developers and companies are generating and retaining so much data,

and data storage is in need of reinvention. Today’s announcements

reinvent storage by building a new SAN for the cloud, automatically

tiering customers’ vast troves of data so they can save money on

what’s not being accessed often, and making it simple to replicate

data and move it around the world as needed to enable customers to

manage this new normal more effectively.”

Teradata is the cloud data analytics platform company, solving

the world’s most complex data challenges at scale. “When your focus

is analyzing the world’s data in real-time, having the right

balance of price and performance is critical—for our business and

our end-customers,” said Dan Spurling, SVP Engineering, Teradata.

“With the release of Gp3, Teradata AWS customers will experience

improved performance and throughput, allowing them to drive

increased analytics at scale. With the significant improvements in

Gp3 over Gp2, we expect 4x higher throughput with fewer EBS volumes

per instance; enabling our customers to receive increased

performance and improved instance level availability.”

Embark is building self-driving truck technology to make roads

safer and transportation more efficient. “We use S3

Intelligent-Tiering to store logs from our fleet of self-driving

trucks. These logs contain petabytes of data from the sensors on

our vehicles, such as cameras and LiDARs, while also storing control

signals, and system logs that we need to perfectly reconstruct

everything that happened on and around our vehicles at any point in

time. Keeping all of this data is critical to our business,” said

Paul Ashbourne, Head of Infrastructure, Embark. “Our teams

frequently access recently-collected data for their analyses, but

over time, most of this data gets colder and subsets of data are

not accessed for months at a time. It’s important that we continue

to save older data in case we need to analyze it again, but doing

so can be costly. S3 Intelligent-Tiering is perfect for us because

it automatically optimizes our storage costs based on individual

object access patterns. With the two new Archive Access tiers, we

save even more when our fleet log data is rarely accessed. All of

this happens seamlessly without our engineering team having to

build or manage any custom storage monitoring systems. With S3

Intelligent-Tiering, everything just works, and we can focus less

time managing our storage and more time on research and

development.”

Zalando is Europe’s leading online platform for fashion and

lifestyle with over 35 million active customers. “We built a 15 PB

data lake on Amazon S3 which has allowed employees to act on and

analyze historical sales and web tracking data that they previously

wouldn’t have had access to,” said Max Schultze, Lead Data

Engineer, Zalando. “By using S3 Intelligent-Tiering, we were able

to save 37% of our yearly storage costs for our data lake, as it

automatically moved objects between a Frequent Access and

Infrequent Access tier. We are looking forward to the new S3

Intelligent-Tiering Archive Access tiers to save even more on

objects that are not accessed for long periods of time.”

SmugMug+Flickr is the world’s largest and most influential

photographer-centric platform. “We’re new to S3 Replication and

have used Amazon S3 since day one. S3 Replication for multiple

destinations delivers awesome options for our global data

handling,” said Andrew Shieh, Director of Engineering/Operations,

at SmugMug+Flickr. “We can now leverage S3 Replication in new ways,

planning optimized replication strategies using our existing S3

Object tags. S3 Replication support for multiple destinations

handles the heavy lifting, so we can spend more of our operational

and development time thrilling our customers. Our petabytes of data

in S3, fully managed by a few lines of code, are at the heart of

our business. These continual improvements to S3 keep SmugMug and

Flickr growing together with our partners at AWS.”

About Amazon Web Services

For 14 years, Amazon Web Services has been the world’s most

comprehensive and broadly adopted cloud platform. AWS offers over

175 fully featured services for compute, storage, databases,

networking, analytics, robotics, machine learning and artificial

intelligence (AI), Internet of Things (IoT), mobile, security,

hybrid, virtual and augmented reality (VR and AR), media, and

application development, deployment, and management from 77

Availability Zones (AZs) within 24 geographic regions, with

announced plans for 12 more Availability Zones and four more AWS

Regions in Indonesia, Japan, Spain, and Switzerland. Millions of

customers—including the fastest-growing startups, largest

enterprises, and leading government agencies—trust AWS to power

their infrastructure, become more agile, and lower costs. To learn

more about AWS, visit aws.amazon.com.

About Amazon

Amazon is guided by four principles: customer obsession rather

than competitor focus, passion for invention, commitment to

operational excellence, and long-term thinking. Customer reviews,

1-Click shopping, personalized recommendations, Prime, Fulfillment

by Amazon, AWS, Kindle Direct Publishing, Kindle, Fire tablets,

Fire TV, Amazon Echo, and Alexa are some of the products and

services pioneered by Amazon. For more information, visit

amazon.com/about and follow @AmazonNews.

View source version on businesswire.com: https://www.businesswire.com/news/home/20201201006062/en/

Amazon.com, Inc. Media Hotline Amazon-pr@amazon.com

www.amazon.com/pr

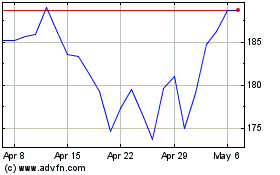

Amazon.com (NASDAQ:AMZN)

Historical Stock Chart

From Aug 2024 to Sep 2024

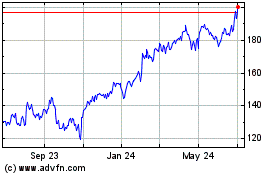

Amazon.com (NASDAQ:AMZN)

Historical Stock Chart

From Sep 2023 to Sep 2024