Thales’s Friendly Hackers Unit Invents Metamodel to Detect AI-generated Deepfake Images

November 20 2024 - 3:00AM

Business Wire

- As part of the challenge organised by France's Defence Innovation Agency (AID) to detect images created by today’s AI platforms, the teams at cortAIx, Thales’s AI accelerator, have developed a metamodel capable of detecting AI-generated deepfakes.

- The Thales metamodel is built on an aggregation of models, each of which assigns an authenticity score to an image to determine whether it is real or fake.

- AI-generated image, video and audio content is increasingly being used for disinformation, manipulation and identity fraud.
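The aggregation idea in the second bullet (several models, each scoring an image's authenticity, combined into one verdict) can be illustrated with a minimal sketch. It assumes each detector returns an authenticity score between 0 and 1 and that each detector's reliability has been measured beforehand, for instance as accuracy on a validation set. The function name, the weights and the 0.5 threshold are hypothetical. The release says the actual metamodel relies on machine learning and decision trees; this sketch swaps in a simpler reliability-weighted average and is not Thales's method.

```python
from typing import Mapping, Tuple


def aggregate_authenticity(
    scores: Mapping[str, float],
    reliability: Mapping[str, float],
    threshold: float = 0.5,
) -> Tuple[float, str]:
    """Combine per-detector authenticity scores into one verdict (hypothetical helper).

    scores      -- detector name -> authenticity score in [0, 1] (1.0 = looks real)
    reliability -- detector name -> non-negative weight (e.g. validation accuracy)
    threshold   -- combined scores at or above this value are labelled "real"
    """
    # Only detectors that actually produced a score contribute to the result.
    total_weight = sum(reliability[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("at least one scoring detector needs a positive weight")
    # Reliability-weighted mean: more trustworthy detectors pull the score harder.
    combined = sum(scores[name] * reliability[name] for name in scores) / total_weight
    verdict = "real" if combined >= threshold else "fake"
    return combined, verdict


# Illustrative numbers only; they are not measurements from any real detector.
score, verdict = aggregate_authenticity(
    {"clip": 0.7, "dnf": 0.2, "dct": 0.3},
    {"clip": 0.6, "dnf": 0.9, "dct": 0.75},
)
print(round(score, 3), verdict)  # 0.367 fake
```

A weighted mean keeps the "strengths and weaknesses of each model" explicit in the weights; a learned combiner such as a decision tree could additionally capture interactions between detectors, at the cost of needing labelled training data.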

Artificial intelligence is the central theme of this year’s European Cyber Week, held from 19 to 21 November in Rennes, Brittany. In a challenge organised by France's Defence Innovation Agency (AID) to coincide with the event, Thales teams have successfully developed a metamodel for detecting AI-generated images. As AI technologies gain traction, and at a time when disinformation is increasingly prevalent in the media and affects every sector of the economy, the deepfake detection metamodel offers a way to combat image manipulation in a wide range of use cases, such as the fight against identity fraud.


AI-generated images are created using AI platforms such as Midjourney, DALL-E and Firefly. Some studies have predicted that within a few years the use of deepfakes for identity theft and fraud could cause huge financial losses. Gartner has estimated that around 20% of cyberattacks in 2023 likely included deepfake content as part of disinformation and manipulation campaigns. Its report[1] highlights the growing use of deepfakes in financial fraud and advanced phishing attacks.

“Thales’s deepfake detection metamodel addresses the problem of identity fraud and morphing techniques[2],” said Christophe Meyer, Senior Expert in AI and CTO of cortAIx, Thales’s AI accelerator. “Aggregating multiple methods using neural networks, noise detection and spatial frequency analysis helps us better protect the growing number of solutions requiring biometric identity checks. This is a remarkable technological advance and a testament to the expertise of Thales’s AI researchers.”

The Thales metamodel uses machine learning techniques, decision trees and evaluations of the strengths and weaknesses of each model to analyse the authenticity of an image. It combines various models, including:

- The CLIP (Contrastive Language-Image Pre-training) method connects images and text by learning shared representations of both. To detect deepfakes, the CLIP method analyses images and compares them with their textual descriptions to identify inconsistencies and visual artefacts.

- The DNF (Diffusion Noise Feature) method uses current image-generation architectures, called diffusion models, to detect deepfakes. Diffusion models work by estimating the noise present in an image; run in reverse from pure noise, this process “hallucinates” content out of nothing. The same noise estimate can in turn be used to detect whether an image has been generated by AI.

- The DCT (Discrete Cosine Transform) method of deepfake detection analyses the spatial frequencies of an image to spot hidden artefacts. By transforming an image from the spatial domain (pixels) to the frequency domain, DCT analysis can reveal subtle anomalies in the image structure that are introduced when deepfakes are generated and are often invisible to the naked eye.
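To make the frequency-domain idea behind the DCT method concrete, here is a minimal pure-Python sketch. It applies an orthonormal 2-D DCT-II to a square grayscale block and measures the share of spectral energy in the high-frequency coefficients. The function names, the default cutoff (coefficients with u + v ≥ N count as "high frequency") and the reliance on a single energy ratio are illustrative assumptions; the release does not say which DCT features Thales uses, and practical detectors generally feed such statistics to a trained classifier rather than reading them directly.

```python
import math
from typing import List, Optional

Block = List[List[float]]


def dct2(block: Block) -> Block:
    """Orthonormal 2-D DCT-II of a square N x N block (naive O(N^4), for illustration)."""
    n = len(block)

    def alpha(k: int) -> float:
        # Normalisation factors that make the transform orthonormal (energy-preserving).
        return math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)

    coeffs = [[0.0] * n for _ in range(n)]
    for u in range(n):
        for v in range(n):
            total = 0.0
            for x in range(n):
                cu = math.cos(math.pi * (2 * x + 1) * u / (2 * n))
                for y in range(n):
                    cv = math.cos(math.pi * (2 * y + 1) * v / (2 * n))
                    total += block[x][y] * cu * cv
            coeffs[u][v] = alpha(u) * alpha(v) * total
    return coeffs


def high_frequency_ratio(block: Block, cutoff: Optional[int] = None) -> float:
    """Share of DCT energy in coefficients with u + v >= cutoff (hypothetical feature)."""
    coeffs = dct2(block)
    n = len(coeffs)
    if cutoff is None:
        cutoff = n  # assumed default: the upper-right half of the spectrum
    total = sum(c * c for row in coeffs for c in row)
    if total == 0.0:
        return 0.0  # an all-zero block has no energy to distribute
    high = sum(coeffs[u][v] ** 2 for u in range(n) for v in range(n) if u + v >= cutoff)
    return high / total
```

As a sanity check, a uniform 8 x 8 block puts all of its energy in the DC coefficient, so its ratio is essentially 0, while a ±1 checkerboard concentrates its energy in the highest frequencies and gives a ratio close to 1. The working assumption behind DCT-based detection is that generation artefacts leave unusual energy patterns in such frequency bands, even when the pixels look natural.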

The Thales team behind the invention is part of cortAIx, the Group’s AI accelerator, which has over 600 AI researchers and engineers, 150 of whom are based at the Saclay research and technology cluster south of Paris and work on mission-critical systems. The Friendly Hackers team has developed a toolbox called BattleBox to help assess the robustness of AI-enabled systems against attacks designed to exploit the intrinsic vulnerabilities of different AI models (including Large Language Models), such as adversarial attacks and attempts to extract sensitive information. To counter these attacks, the team develops advanced countermeasures such as unlearning, federated learning, model watermarking and model hardening.

In 2023, Thales demonstrated its expertise in the CAID (Conference on Artificial Intelligence for Defence) challenge organised by the French defence procurement agency (DGA), which involved finding AI training data even after it had been deleted from the system to protect confidentiality.

About Thales

Thales (Euronext Paris: HO) is a global leader in advanced technologies, specialising in three business domains: Defence & Security, Aeronautics & Space, and Cybersecurity & Digital Identity.

The Group develops products and solutions that help make the world safer, greener and more inclusive.

Thales invests close to €4 billion a year in Research & Development, particularly in key innovation areas such as AI, cybersecurity, quantum technologies, cloud technologies and 6G.

Thales has 81,000 employees in 68 countries. In 2023, the Group generated sales of €18.4 billion.


[1] 2023 Gartner Report on Emerging Cybersecurity Risks.

[2] Morphing involves gradually changing one face into another in successive stages by modifying visual features to create a realistic image combining elements of both faces. The final result looks like a mix of the two original appearances.

View source version on businesswire.com: https://www.businesswire.com/news/home/20241120808818/en/

Marion Bonnet, Thales PR Manager, Marion.bonnet@thalesgroup.com, +33660384892

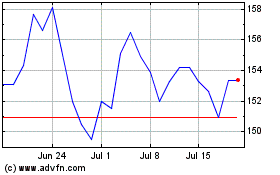

Thales (EU:HO)

Historical Stock Chart

From Nov 2024 to Dec 2024

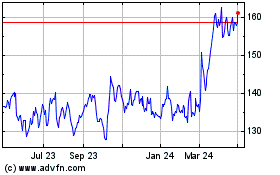

Thales (EU:HO)

Historical Stock Chart

From Dec 2023 to Dec 2024