By John D. Stoll

Facebook Inc.'s business model isn't a secret.

More people talking gets more people scrolling and clicking. The

buzzier the posts, the longer users stick around. That means more

eyeballs and more data on the users behind them. And eyeballs and

data are the opiate of advertisers big and small.

It is a rewarding formula, earning the social-media company $5.2

billion in the second quarter alone. It is also the heart of

Facebook's dilemma.

Provocative speech, the kind that nestles up to any line

Facebook draws, is like catnip to users, but the company's

long-term profitability depends on policing it without abusing

users' trust.

"You know, one person's opinion, one person's free expression

can be another person's hate," Facebook's Chief Operating Officer

Sheryl Sandberg told me recently. She said she believes the

company's standards are tough, but will never be tough enough for

some people.

How does the company show it respects the blurry line between

free speech and hate speech without making portions of Facebook's 3

billion users feel like their views are taboo or making another

swath of users feel like it doesn't care about the pain that some

posts cause them?

This is a challenge faced across social media. A speech by

founder Mark Zuckerberg last October at Georgetown's Institute of

Politics and Public Service made clear Facebook errs on the side of

free expression.

"Giving more people a voice gives power to the powerless, and it

pushes society to get better over time," he said.

Many say that's too hands-off, and the cautious approach tends

to foster the spread of misinformation and divisiveness. Critics

have suggested Mr. Zuckerberg and Ms. Sandberg are more interested

in protecting their pocketbooks than civil rights.

Ms. Sandberg, not surprisingly, doesn't see it that way.

"Without meeting our responsibility, we're not going to build our

business."

During our 40-minute Zoom call, Ms. Sandberg listed metrics

supporting her case. Facebook on its own now finds 95% of the hate

speech that is taken down, compared with 24% a couple of years ago.

Millions of pieces of content are removed by

artificial-intelligence tools.

"If you look at how we do our jobs and compare it to four years

ago -- Mark, myself, all of our senior leaders...we all spend a lot

more of our time on the protection of the community than we

did."

Building a business is a juggling act. Yes, lowering the

temperature in people's news feeds helps us feel even better about

Facebook. But let's remember the posts that stir the most

controversy are a key ingredient to success.

Facebook's dominance hinges not only on visits; it also depends on

keeping ahead of competitors constantly trying to topple it. It

costs billions of dollars and thousands of people to outrun TikTok,

Twitter, Snapchat and YouTube.

How would shareholders feel if Ms. Sandberg turned too many

employees into content cops? Every dollar or minute spent to boost

security or user safety could be a dollar or minute taken from some

other business initiative.

"There is a resource trade-off," she said. "We hire an engineer,

we can put them on an ads program to build more ads and sell more

ads. We can put them on safety. We can put them on security."

Ms. Sandberg addressed several topics during our call. We caught

up on her Lean In foundation, which published findings showing how

disadvantaged Black women are in career advancement.

We also talked about potential next career steps.

"Nope," she is "not interested" in the Senate seat that Kamala

Harris would vacate if elected vice president in November. Nor is

the former Clinton administration member, who served as chief of

staff to former Treasury Secretary Larry Summers, interested in a

role in Joe Biden's White House, should he beat President Donald

Trump.

"I really love my job," she said. But, "every day is not

easy."

Last month, a civil-rights audit conducted by an independent

attorney but commissioned by Facebook criticized the company for

being "too reactive and piecemeal" in responses to hate speech, and

for presiding over a "seesaw of progress and setbacks." Ms.

Sandberg chaired the task force that conducted the audit.

A major complaint in the report: Facebook's handling of Mr.

Trump's posts about protestors and voting that have been

characterized as racist or misleading. Ms. Sandberg said the

company does not profit from or incentivize provocative content,

but it is deliberately slow to hit the delete button.

"We get accused from conservatives of being anti-conservative,"

Ms. Sandberg said. "They look at us and they see, you know, liberal

Silicon Valley company." What's the answer? "We're going to be very

clear about what our rules are, very clear and working to apply

them as even-handed as possible."

If Mr. Trump violates the company's voluminous list of community

standards (including hate speech and misinformation), his posts

come down. But penalizing a person as polarizing as Mr. Trump ain't

easy.

Mr. Zuckerberg said in a 2018 memo to staff that Facebook's

audience, like those who view cable news shows or peruse tabloids,

"engage disproportionately with more sensationalist and provocative

content." Sure, it undermines the quality of the discourse but "as

a piece of content gets close to that line, people will engage with

it more on average -- even when they tell us afterwards they don't

like the content."

Discontent with Facebook's approach grew as Black Lives Matter

demonstrations accelerated. Big companies, including Unilever PLC

and Coca-Cola Co., boycotted advertising on Facebook and other

platforms citing divisiveness and hate speech.

"It's important to us that the most important brands in the

world feel comfortable affiliating with us," Ms. Sandberg said.

Facebook tightened controls, but didn't overhaul its policy. "We

aren't going to set up our content standards because people are

protesting us or boycotting us, or for dollars."

The boycott was short-lived, but it raises a long-term question.

Will patience with Facebook eventually run out?

The next big test will be the 2020 election.

"We all know that there's a lot at stake for this election. Full

stop," Ms. Sandberg said.

Ms. Sandberg said Facebook is committed to being "much more

proactive around pushing out accurate information in this

election." The company's efforts to detect misinformation have been

sharpened during the pandemic, she said. She added that Facebook

blocked bad actors in "hundreds of elections around the world" in

2018.

"When people talk about things Facebook missed in an election

and get upset at us for things, they're almost always talking about

2016." She's right, but that doesn't change the fact Facebook is

still paying for that.

The company has also been criticized for the alleged role

misinformation campaigns played ahead of the historic Brexit vote

and was also faulted for allegedly allowing the spread of false

stories during recent U.K. elections.

For 2020, Facebook is once again pushing for voters to register

via its site; building a voter information center; and turning over

questionable content to a network of third-party fact checkers

sanctioned by Poynter, a journalism research organization. Posts

can be labeled false or potentially misleading.

"We take our responsibility very seriously, and it's our job to

show up at work every day, trying to stop anything bad." But of

course, "the bad guys will always try to get ahead."

Facebook's task is to continue working to convince people it is, in

fact, the good guy.

Write to John D. Stoll at john.stoll@wsj.com

(END) Dow Jones Newswires

August 21, 2020 11:39 ET (15:39 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

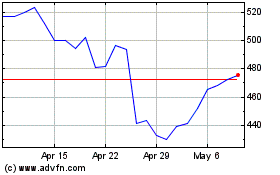

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2024 to May 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From May 2023 to May 2024