Facebook's Deepfake Video Ban Permits Some Altered Content

January 07 2020 - 3:38AM

Dow Jones News

By Betsy Morris

Facebook Inc. is banning videos that have been manipulated using advanced tools, though it won't remove all doctored content, as the social media giant tries to combat disinformation without stifling speech.

The policy unveiled Monday by Monika Bickert, Facebook's vice president for global policy management, is the company's most concrete step to fight the spread of so-called deepfakes on its platform.

Deepfakes are images or videos that have been manipulated through the use of sophisticated machine-learning algorithms, making it nearly impossible to differentiate between what is real and what isn't.

"While these videos are still rare on the internet, they present a significant challenge for our industry and society as their use increases," Ms. Bickert said in a blog post.

Facebook said it would remove or label misleading videos that had been edited or manipulated in ways that would not be apparent to the average person. That would include removing videos in which artificial intelligence tools are used to change statements made by the subject of the video, or to replace or superimpose content.

Social media companies have come under increased pressure to stamp out false or misleading content on their sites ahead of this year's American presidential election.

Late last year, Alphabet Inc.'s Google updated its political advertisement policy and said it would prohibit the use of deepfakes in political and other ads. In November, Twitter said it was considering identifying manipulated photos, videos and audio shared on its platform.

Facebook's move could also expose it to new controversy. It said its policy banning deepfakes "does not extend to content that is parody or satire, or video that has been edited solely to omit or change the order of words." That could put the company in the position of having to decide which videos are satirical, which aren't and where to draw the line on what doctored content will be taken down.

Facebook has already been trying to walk a thin line on other content moderation issues ahead of this year's presidential election. The company, unlike some rivals, has said it wouldn't block political advertisements even if they contain inaccurate information. That policy drew criticism from some politicians, including Sen. Elizabeth Warren, a Democratic contender for the White House. Facebook later said it would ban ads if they encouraged violence.

The new policy also marks the latest front in Facebook's battle against those who use artificial intelligence to spread messages on its site. Last month, the company took down hundreds of fake accounts that used AI-generated photos to pass themselves off as real.

In addition to Facebook's latest policy on deepfakes, which generally rely on AI tools to mask that the content is fake, the company will continue to screen for other misleading content. It will also review videos that have been altered using less sophisticated methods and place limits on such posts.

The Facebook ban wouldn't have applied to an altered video of House Speaker Nancy Pelosi. That video of a speech by Mrs. Pelosi, widely shared on social media last year, was slowed down and altered in tone, making her appear to slur her words. Facebook said the video didn't qualify as a deepfake because it used regular editing, though the company still limited its distribution because of the manipulation.

"If we simply removed all manipulated videos flagged by fact-checkers as false, the videos would still be available elsewhere on the internet or social media ecosystem. By leaving them up and labeling them as false, we're providing people with important information and context," Ms. Bickert said.

Jeff Horwitz contributed to this article.

Write to Betsy Morris at betsy.morris@wsj.com

(END) Dow Jones Newswires

January 07, 2020 03:23 ET (08:23 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

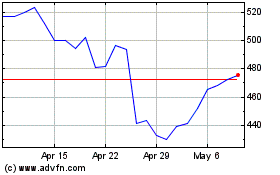

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024