By Keach Hagey and Jeff Horwitz

Parler launched in 2018 as a freewheeling social-media site for

users fed up with the rules on Facebook and Twitter, and it quickly

won fans from supporters of President Trump. On Monday, it went

dark, felled by blowback over its more permissive approach.

Amazon.com Inc. abruptly ended web-hosting services to the

company, effectively halting its operations, prompting Parler to

sue Amazon in Seattle federal court. Other tech partners also

acted, crippling operations.

Driving the decision was the role of Parler in last week's mob

attack on the U.S. Capitol. On the afternoon of the riot, Amazon

warned executives from Parler it had received reports the

social-media platform was hosting "inappropriate" content, and that

Parler had 24 hours to address it.

"We have been appropriately addressing this type of content and

actively working with law enforcement for weeks now," Parler policy

chief Amy Peikoff told Amazon a few hours later in an email

reviewed by The Wall Street Journal.

Amazon wrote back Thursday: "Please consider it resolved." The

email gave Parler executives confidence that their moderation

system, however strained, was acceptable to its tech partner.

It wasn't. Within two days of that correspondence, Amazon

announced it was booting Parler from its cloud platform, joining

Alphabet Inc.'s Google and Apple Inc. in pulling the plug on the

service. Other vendors turned their backs, too: Twilio Inc. cut off

Parler's two-factor authentication system, preventing it from

weeding out fake new accounts, and Okta Inc. locked Parler out of

key enterprise software tools.

Parler had become a pariah for serving as a hub for people who

organized, participated in or celebrated the storming of the

Capitol that left five dead, as well as a forum for some who have

posted about future violent actions around the coming

inauguration.

In some Parler posts flagged by tech companies as examples of

inadequate moderation, users posted about "poisoning the water

supply" in minority neighborhoods and killing their alleged

enemies.

Executives at Parler said posts inciting violence violate its

rules, although they acknowledged that such content was on the

platform and others in the run-up to the Capitol attack. Its team

of moderators -- mainly volunteers who receive training on what

content should be removed -- has been overwhelmed and often faced

large backlogs in handling offending posts as the service added

users by the thousands in recent months, they said.

Parler Chief Executive John Matze said the company had offered

to use algorithms to help moderators identify and weed out violent

content, tools employed by larger social networks that Parler

executives have previously resisted.

Parler is in a frenzied push to find new vendors to host its

services so that it can resume operations, a process executives

said could last at least a week. Backed by investors such as

Republican donor Rebekah Mercer, Parler has the resources to

restore service outside of Amazon and plenty of cash on hand, said

Mr. Matze. But he acknowledged that a sustained loss of service

could undermine the platform's future.

"We had regular conversations with them, and none of them gave

us any indications that there were deep problems in the

relationship," said Jeffrey Wernick, Parler's chief operating

officer.

The company's looser policies on content moderation have

attracted large groups of supporters of President Trump who use it

to push their claims that the 2020 election was stolen,

particularly as larger platforms like Twitter and Facebook have

cracked down on this kind of speech. The company says it has 15

million users.

Social-media companies of all stripes have struggled to strike

the right balance between free speech and content moderation.

Liberals have generally argued that the tech platforms should be

more aggressive in policing hate speech, while conservatives have

complained that Big Tech is biased against their point of view.

Parler's troubles show the power a small number of tech

companies have over online discourse. Moves made by platforms

including Twitter and Facebook to ban President Trump, citing rules

prohibiting content that incites violence, have the potential to

reshape their businesses.

Before Parler was shut down, users alerted followers to reach

them on other platforms, including those on Gab, or on messaging

apps such as Telegram. Monday morning, Parler's website,

parler.com, was down and users could no longer access news feeds or

make new posts.

Hours later, Parler filed its lawsuit, alleging it was kicked

off Amazon's cloud servers due to "political animus" and for

anticompetitive reasons. An Amazon spokesman said the lawsuit's

claims had no merit and that it respects Parler's right to

determine what content it will allow.

Amazon explained its decision to shut off services to Parler by

citing 98 instances of violent content on the platform that it said

violated its rules. Parler said it removed all 98 items, in some

instances before Amazon reported them.

Determining whether discourse on Parler or the platform's

moderation of it was fundamentally worse than on other forums is

"kind of an impossible question, empirically and philosophically,"

said Evelyn Douek, a Harvard Law School lecturer who studies

content moderation. While a case can be made for app stores and

other internet infrastructure providers taking action against

platforms that don't take moderation requirements seriously, Ms.

Douek said, the speed of tech companies' action against Parler

raised questions.

"If 98 is the threshold, has AWS looked at the rest of the

internet," she said. "Or at Amazon?"

Daniel J. Jones, president of nonprofit research group Advance

Democracy, said the organization found numerous Parler users

calling for violence on Jan. 6.

"Far-right extremists and conspiratorial groups, such as QAnon,

specifically flocked to Parler because of the lack of moderation

and guidelines," he said.

Parler relies on a volunteer community of jurors to moderate

content. After some experimentation with its initial rules, Parler

settled on generally allowing users to engage in any

constitutionally protected speech. There would be no fact-checking,

no restrictions on offensive language and no prohibition on gory or

adult content, so long as it was tagged "sensitive" by the creator,

executives said. Threats, spam and criminal activity weren't

allowed, they said.

The first big test of the system came in November, when

conservatives upset about the outcome of the presidential election

flocked to Parler, doubling the company's user base to more than 10

million within a few days. The new users brought a host of problems

such as spam and pornography, executives said, prompting complaints

from larger tech platforms. The volunteer jury system seemed to

work, they said.

To keep up, Parler tripled its volunteer moderator staff from

200 to 600 over the past two months and started paying them monthly

stipends. It also has been hiring full-time moderators in recent

weeks.

The Journal viewed some comments flagged for review under

Parler's moderation system. The content included spam ads for Trump

commemorative coins, fake accounts and incitement to violence.

"Threatens to kill Pence," one user noted about a post. Another,

flagging a call to kill Facebook CEO Mark Zuckerberg and Twitter

CEO Jack Dorsey, said: "You can't allow this or we won't have a

voice."

Mr. Matze and other Parler executives noted that content calling

for the overthrow of the U.S. government remains on other

platforms. The Wall Street Journal has previously written about a

private Facebook group called "the 2020 Civil War," where users

discussed plans to march on the White House on Inauguration

Day.

Facebook said on Monday it was removing content from its

flagship platform and Instagram containing the phrase "stop the

steal" -- a reference to claims that Mr. Trump would have won the

2020 election if not for widespread election fraud.

On Saturday, "HangMikePence" was trending on Twitter, which

reflected chants from protesters at the Capitol who were

disappointed with the vice president's decision to proceed with

certifying election results. Twitter said Saturday evening that it

blocked the phrase and other similar ones from trending to promote

healthy discussions on the platform.

On Dec. 14, Amazon flagged four posts to Parler, saying the

content "clearly encourages or incites people to commit violence

against others," which was a violation of its terms of service,

according to an email reviewed by the Journal. One of the posts

calling for violence was from Nov. 16. Another, from early

December, included comments such as: "My wishes for a racewar have

never been higher. I find myself thinking about killing n -- s and

jews more and more often."

Ms. Peikoff said Parler removed the content immediately and

believed Amazon was satisfied. One person familiar with Amazon's

thinking said that correspondence began a process of enforcement

that ended with Amazon cutting ties to the social network.

Parler executives said before the Capitol riot there was a

growing number of calls for violence on the platform, as well as on

the rest of the internet.

Ms. Peikoff said she instructed moderators who had been hunting

for spam to also look for incitements to violence and report them to

law enforcement when appropriate. "I was concerned that there was

actually going to be some sort of violence on the 6th," she

said.

The day of the riot, Parler was among the social-media networks,

including Facebook, YouTube and Twitter, that Trump supporters

seeking to contest the election results used to organize the

protests and celebrate the attack, according to researchers and

analysts who study extremism and disinformation, as well as posts

reviewed by the Journal.

Unlike those services, Parler had actively courted users

disenchanted with the major platforms. Mr. Matze acknowledges that

Parler's moderators fell behind, given the surge of activity last

Wednesday, by around 20,000 reports -- a roughly two-day

backlog.

By the next day, advocates such as Sleeping Giants, a Twitter

account created to press advertisers to stop supporting what they

consider hate speech, and tech companies' employees were putting

pressure on Google, Apple, Amazon and others to sever ties with

Parler.

In suspending Parler from the Play Store, Google said the

company needed to implement a robust moderation system to handle

"egregious" content.

Apple told Parler it received numerous complaints that the

platform had been used to organize the assault on the Capitol and

was being used to organize future violence. Its list of evidence

began with a link to Sleeping Giants' feed, which had screenshots

of posts ranging from influencers such as pro-Trump lawyer L. Lin Wood

calling for firing squads to shoot Mr. Pence to an account with few

followers calling for people to bring their weapons to the nation's

capital on Jan. 19.

Parler executives said in interviews Saturday that all the posts

flagged by Apple and most of these accounts had been taken down.

Mr. Wood told the Journal Sunday his post was meant as rhetorical

hyperbole.

--Georgia Wells contributed to this article.

Write to Keach Hagey at keach.hagey@wsj.com and Jeff Horwitz at

Jeff.Horwitz@wsj.com

(END) Dow Jones Newswires

January 11, 2021 20:43 ET (01:43 GMT)

Copyright (c) 2021 Dow Jones & Company, Inc.

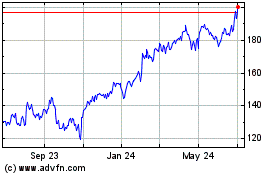

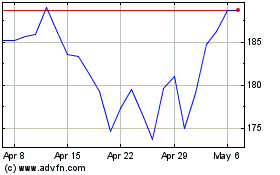

Amazon.com (NASDAQ:AMZN)

Historical Stock Chart

From Mar 2024 to Apr 2024

Amazon.com (NASDAQ:AMZN)

Historical Stock Chart

From Apr 2023 to Apr 2024