Facebook Boosts A.I. to Block Terrorist Propaganda

June 15 2017 - 1:29PM

Dow Jones News

By Sam Schechner

Under intense political pressure to better block terrorist propaganda on the internet, Facebook is leaning more on artificial intelligence.

The social-media firm said Thursday that it has expanded its use of A.I. in recent months to identify potential terrorist postings and accounts on its platform -- and at times to delete or block them without review by a human. In the past, Facebook and other tech giants relied mostly on users and human moderators to identify offensive content. Even when algorithms flagged content for removal, these firms generally turned to humans to make a final call.

Companies have sharply boosted the volume of content they have removed in the last two years, but these efforts haven't proven effective enough to tamp down a groundswell of criticism from governments and advertisers. They have accused Facebook, Google parent Alphabet Inc. and others of complacency over the proliferation of inappropriate content -- in particular, posts or videos deemed extremist propaganda or communication -- on their social networks.

In response, Facebook disclosed new software that it says it is using to better police its content. One tool, in use for several months now, combs the site for known terrorist imagery, like beheading videos, in order to stop them from being reposted, executives said Thursday. Another set of algorithms attempts to identify propagandists who have already been kicked off the platform -- and sometimes autonomously blocks them from opening new accounts. A third, experimental tool uses A.I. that has been trained to identify language used by terrorist propagandists.

"When it comes to imagery related to terrorism, context is everything," said Monika Bickert, Facebook's head of global policy management. "For us, technology is an important part of flagging it. People are invaluable in understanding that context."
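For readers curious how matching against "known terrorist imagery" can work in principle, here is a minimal sketch using a simple perceptual hash (an "average hash") over small grayscale pixel grids. The article does not say which technique Facebook uses; the function names, the 4x4 input format and the distance threshold below are hypothetical illustrations, and production systems rely on far more robust fingerprints. The idea is that a re-uploaded copy with small edits still produces a nearly identical fingerprint, so it can be matched against a list of previously removed images.

```python
from typing import List, Set

# Hypothetical input format: a small grayscale image already downscaled to a
# fixed grid (here 4x4), with each value between 0 (black) and 255 (white).
Pixels = List[List[int]]


def average_hash(pixels: Pixels) -> int:
    """Fingerprint an image: one bit per pixel, 1 if brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def matches_known(pixels: Pixels, known: Set[int], max_distance: int = 2) -> bool:
    """True if the image is within `max_distance` bits of any known fingerprint."""
    h = average_hash(pixels)
    return any(hamming(h, k) <= max_distance for k in known)


if __name__ == "__main__":
    original = [
        [0, 255, 0, 255],
        [255, 0, 255, 0],
        [0, 255, 0, 255],
        [255, 0, 255, 0],
    ]
    known_hashes = {average_hash(original)}  # stands in for a database of flagged images

    # A re-upload with every pixel brightened still maps to the same fingerprint.
    brightened = [[min(255, p + 10) for p in row] for row in original]
    print(matches_known(brightened, known_hashes))  # True

    # An unrelated, uniformly dark image does not match.
    dark = [[0] * 4 for _ in range(4)]
    print(matches_known(dark, known_hashes))  # False
```

Because each pixel is compared with the image's own mean, a uniform brightness change leaves the fingerprint untouched; the hypothetical `max_distance` tolerance is what lets copies with small pixel-level changes still match.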

Facebook says that it sends all ambiguous removals to humans for review -- and is hiring large numbers of new content moderators to handle them. But the firm's new moves reflect a growing willingness to trust machines when it comes to thorny tasks like distinguishing inappropriate content from satire or news coverage -- something firms resisted just two years ago, after a spate of attacks, as a potential threat to free speech.

One factor in the changed approach, executives say, has been the improved ability of algorithms to identify unambiguously terrorist content in some cases, while referring other content for human review.

"Our A.I. can know when it can make a definitive choice, and when it can't make a definitive choice," said Brian Fishman, lead policy manager for counterterrorism at Facebook. "That's something new."
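One common way to express the idea Fishman describes, a system that knows when it can and cannot make a definitive call, is a pair of confidence thresholds on a classifier's score. The sketch below assumes some model (not shown) that outputs a probability that a post is terrorist content; the names `route`, `Thresholds` and `Decision` and both cut-off values are hypothetical and are not Facebook's. Scores above the upper cut-off are acted on automatically, scores in the middle band go to a human moderator, and low scores are left alone.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    REMOVE = "remove"              # confident enough to act without a person
    HUMAN_REVIEW = "human_review"  # ambiguous: send to a content moderator
    NO_ACTION = "no_action"        # unlikely to violate policy


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs, chosen only for illustration.
    remove_at: float = 0.98
    review_at: float = 0.50


def route(score: float, t: Thresholds = Thresholds()) -> Decision:
    """Map a classifier's probability score to an action.

    `score` is assumed to come from some model (not shown) estimating the
    probability that a post is terrorist content.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability in [0, 1]")
    if not t.review_at <= t.remove_at:
        raise ValueError("review_at must not exceed remove_at")
    if score >= t.remove_at:
        return Decision.REMOVE
    if score >= t.review_at:
        return Decision.HUMAN_REVIEW
    return Decision.NO_ACTION


if __name__ == "__main__":
    for s in (0.99, 0.70, 0.10):
        print(s, route(s).value)
```

Raising `remove_at` shifts more decisions into the human-review band, which is the trade-off the article describes between automated removal and the context that people supply.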

Another factor in the fresh A.I. push: intense pressure from advertisers and governments, particularly in Europe. British Prime Minister Theresa May ratcheted up complaints this month in the wake of a series of deadly terror attacks in the U.K. Just days before a general election, meanwhile, the campaigns for both of Britain's main parties pulled political ads from Alphabet's YouTube video-sharing site after being alerted that those ads were appearing before extremist content.

Germany earlier this year proposed a bill that could fine firms up to EUR50 million ($56 million) for failing to remove fake news or hate speech -- including terrorist content. The U.K. and France published a counterterrorism action plan this week that calls on tech giants to go beyond deleting content that is flagged, and instead identify it beforehand to prevent publication.

"There have been promises made. They are insufficient," French President Emmanuel Macron said Tuesday.

Facebook has already rolled out software to identify other questionable content such as child pornography and fake news stories. Ahead of French and German elections this year, the company began tagging "disputed" stories when outside news organizations ruled them false.

Social media firms including Facebook, Yahoo Inc. and Twitter Inc. are adamant that they want to stamp out terrorism on their platforms -- and already do a lot to remove such content. Twitter says it is expanding its use of automated technology to combat terrorist content, too. From July through December last year, Twitter said internal tools flagged 74% of the 376,890 accounts it removed.

YouTube says it is collaborating with the other social media firms on a shared database of previously identified terrorist imagery, which allows the companies to more quickly identify posts that use it. But the company doesn't use technology to screen new content for policy violations, saying computers lack the nuance to determine whether a previously uncategorized video is extremist.

"These are complicated and challenging problems, but we are committed to doing better and being part of a lasting solution," a YouTube spokesman said.

--Jack Nicas contributed to this article.

(END) Dow Jones Newswires

June 15, 2017 13:14 ET (17:14 GMT)

Copyright (c) 2017 Dow Jones & Company, Inc.

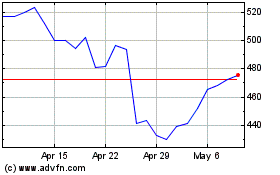

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024